A recent study by a pair of Oxford professors estimated that nearly half of all workers in the United States could eventually lose their jobs due to automation. While journalists have been faced with round after round of layoffs in recent years as a result of the Internet’s decimation of newspapers’ traditional business models, the fear that robots might take their jobs probably isn’t even on most reporters’ radar.

But maybe it should be.

According to a study published late last month in Journalism Practice, journalists might want to start looking over their shoulders for the algorithmic cub reporters that are eventually going to take their beats.

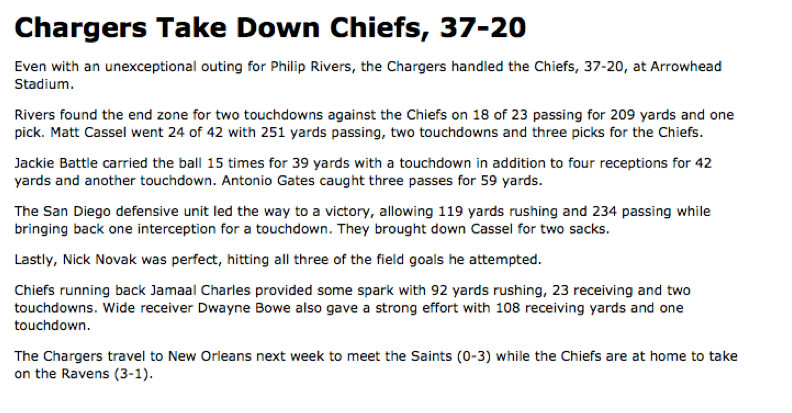

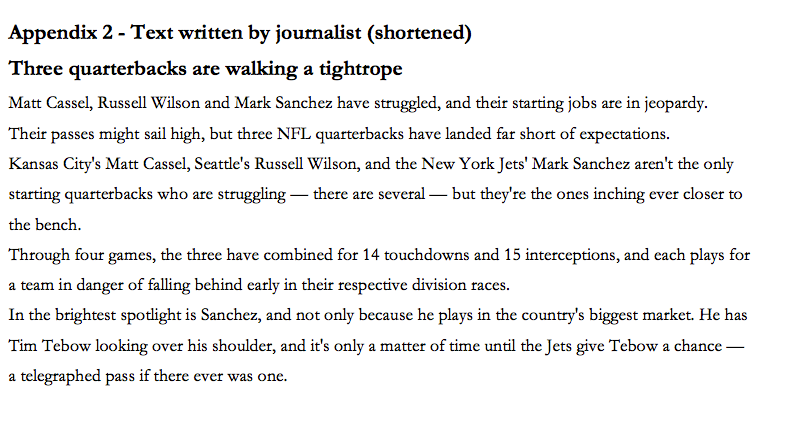

The goal of the study, titled “Enter the Robot Journalist,” was to determine whether readers are able to differentiate between news content created by a software program and content written by a flesh-and-blood journalist. Study author Dr. Christer Clerwall of Sweden’s Karlstad University gave a group of students in an undergraduate web production course two articles recapping the same NFL football game.

The first article was produced by software from the company Automated Insights:

The second was written by a human working for the Los Angeles Times (although this article was shortened in order to match the length of the one written by the computer program):

Clerwall writes of test subjects’ feedback: “[T]he software-generated content … [was] perceived as, for example, descriptive, boring and objective, but not necessarily discernible from content written by journalists.”

The students said that they found the article written by the computer significantly more informative and trustworthy than the one written by the journalist; however, they noted that it was far less pleasant to read.

“Perhaps the most interesting result in the study is that there are [almost] no … significant differences in how the two texts are perceived by the respondents,” Clerwall wrote. “The lack of difference may be seen as an indicator that the software is doing a good job, or it may indicate that the journalist is doing a poor job – or perhaps both are doing a good (or poor) job?”

The scope of this study was relatively small—only a single article and, at that, the type of article that can be assembled relatively easily by combining pieces of data produced in all football games. Automating the creation of a New Yorker think piece or an in-depth profile of an up-and-coming political figure is likely a far more difficult task for a computer program to do convincingly.

While Clerwall explains that switching out real, live journalists for automated ones could allow news organizations to save money (by employing fewer journalists), he insists the prevalence of automated articles could actually help reporters do better work in the long run. “How automated content may influence journalism and the practice of journalism is a quite open question,” Clerwall writes. “An optimistic view would be that automated content will free resources that will allow reporters to focus on more qualified assignments, leaving the descriptive ‘recaps’ to the software.”

A number of companies looking to automate journalism have popped up in recent years. The most high-profile is the Chicago-based Narrative Science, which functions as a platform that media outlets can use to automate reporting. According to an article about Narrative Science in the Atlantic, some of the company’s biggest clients are Forbes, which uses the platform to automatically create profiles of well-performing companies based on earnings and stock market data, and the Big Ten Network, which does post-game wrap-ups drawn from scores and player statistics.

The real question, however, should rest in the mind of the consumer. In the next article you read, you may have a creeping suspicion that it was written by a robot. What about the one you’re reading right now? Was it written by a human being?

Photo by Mirko Tobias Schaefer/Flickr