If Facebook has felt a little bit smarter lately, or a little creepier, it’s probably not just you. On stage at the company’s F8 developer conference, Facebook gave attendees a peek behind the curtain of the elusive News Feed formula that determines what users see when they visit the site, and it’s more complicated (and more omniscient) than ever.

During a question-and-answer session, one audience member asked if it was a coincidence that his own News Feed began surfacing stories from a friend who had recently contacted him via Facebook’s mobile Messenger app:

“The fact that you are using Messenger and talking to [a] person and talking to them is also something we’ll take into account,” Facebook’s Adam Mosseri explained.

“If you haven’t talked to somebody for a long time and then all of the sudden you start talking to them a bunch on Messenger, that’s actually an important signal that goes into [News Feed] ranking… and we’ll show you that person’s content.”

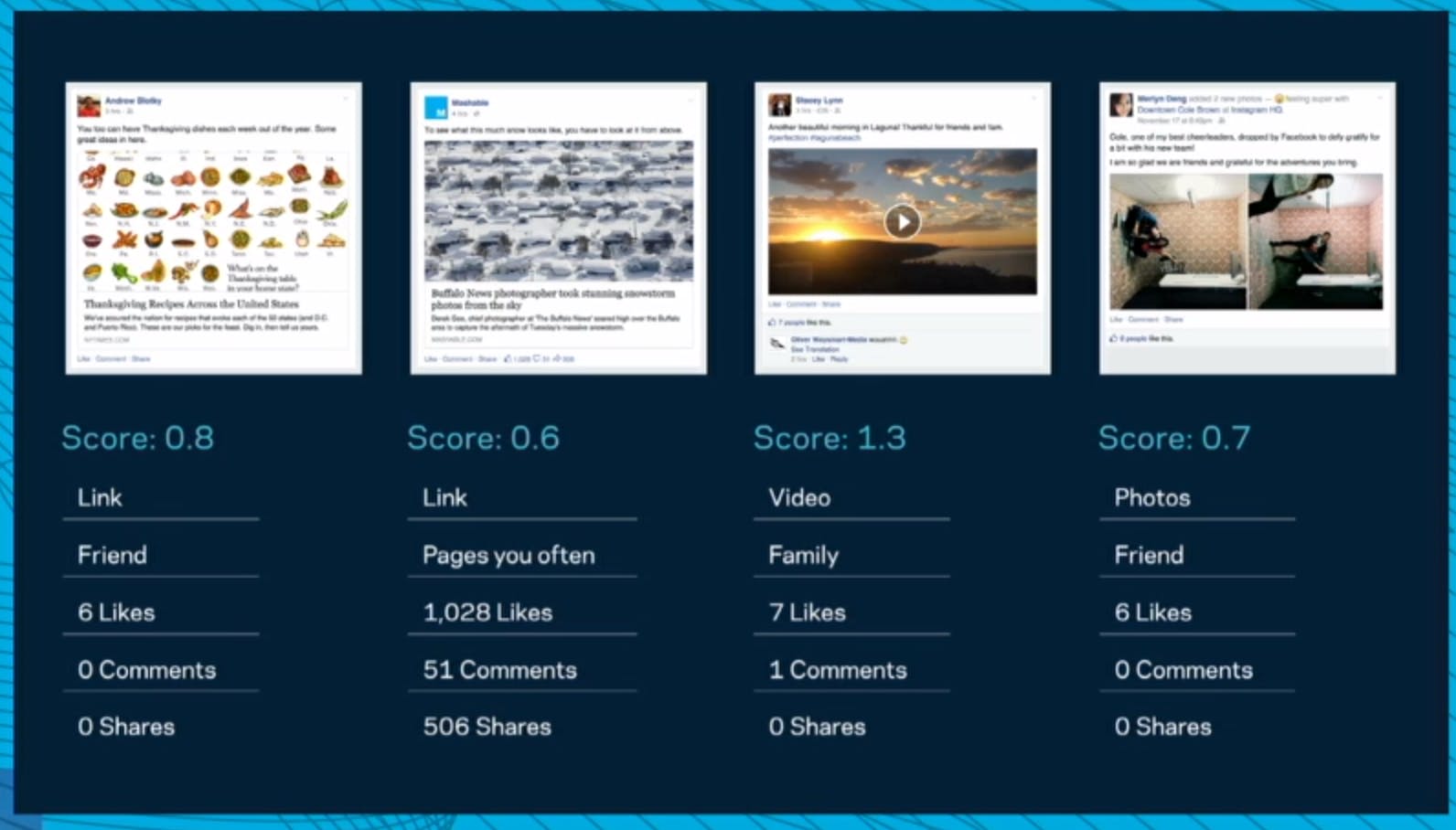

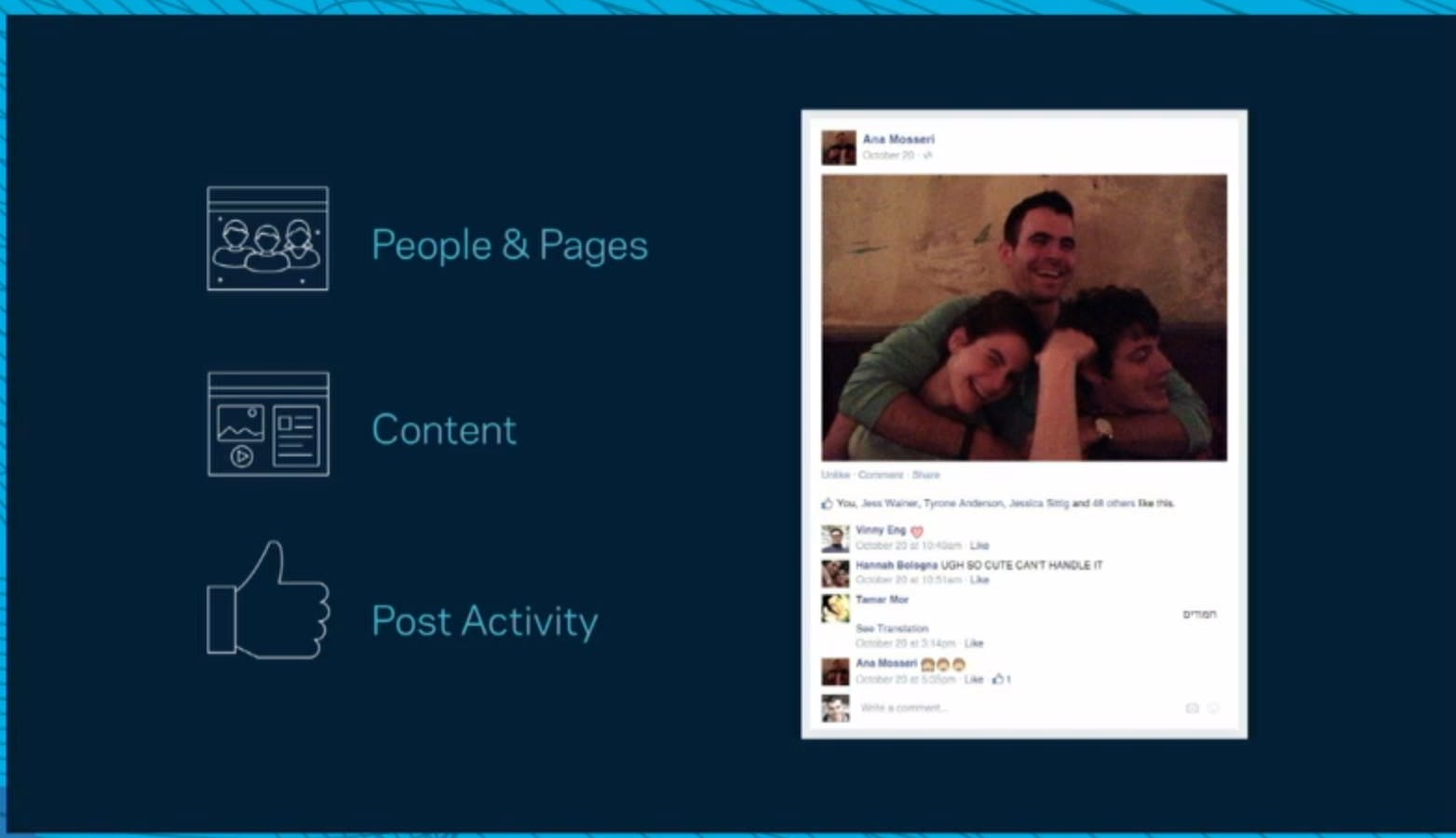

Facebook also looks at location tags on photos and posts, rolling that geodata into its massive collection of what it calls “ranking signals.” The most powerful of these signals are the relationship you have with other users (Facebook might show you more content from your wife, for example), the type of content (if you watch a lot of videos, Facebook will serve you more videos), and the other activity on a post (a New York Times story with 2,000 likes would have better odds of showing up than one with only 10).

Facebook’s News Feed team says it will work toward greater transparency about how Facebook chooses the custom stream of content that populates each user’s News Feed. Facebook goes to great lengths to decide what you see on its social news merry-go-round, but that process is mostly opaque to its users. On stage, Facebook Chief Product Officer Chris Cox described how the company weeds out “the stuff that you think is lame or boring.”

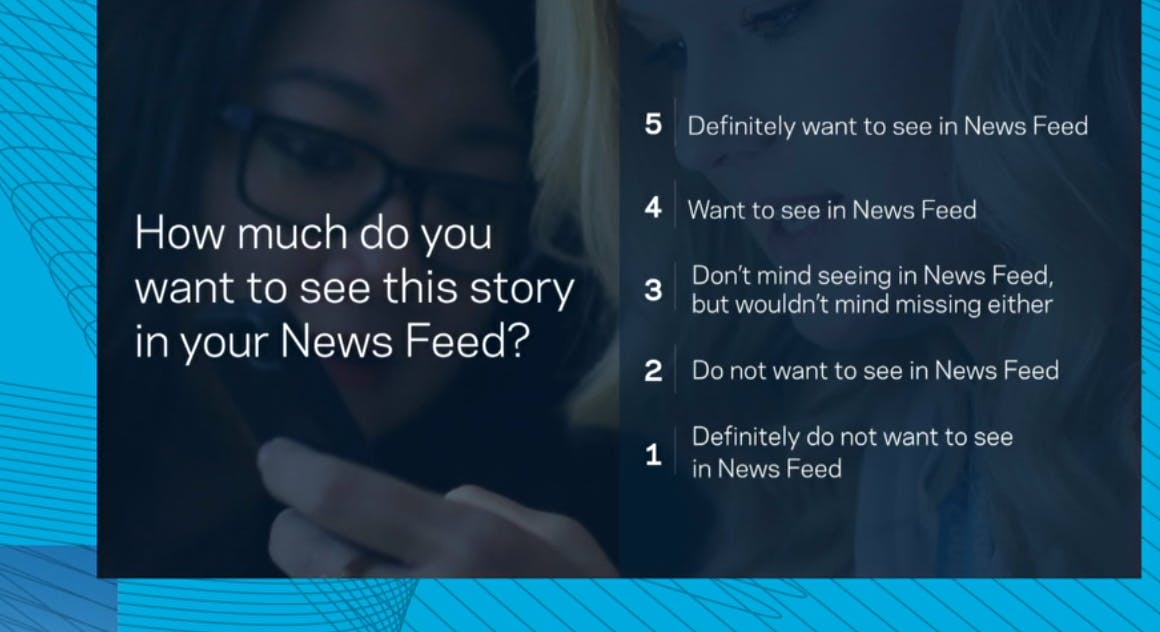

Beyond its mostly secret and ever-changing algorithms, Facebook has a team dedicated to studying signals from users about what kind of content they want to see. According to Cox, while clicks and comments have traditionally been the main way to evaluate what users want to see, the team is “moving to something more human” by relying on Facebook users who are actually paid to interact with and rate content on the social platform.

“When you first sign up for Facebook, it’s completely empty,” Mosseri said. But the way Facebook populates the News Feed is quickly becoming smarter and more sophisticated. The News Feed team described how its human-powered feedback teams, made up of people “from broad walks of life,” actually rate content instead of just engaging with whatever looks interesting. Through that kind of quality-oriented feedback, Facebook realized that just because someone clicks through a “low quality” clickbait-style story doesn’t mean they liked the experience.

That’s why Facebook asks a team of paid users to rate how much they want to see a given story in their feed on a scale of one (“definitely do not want to see in News Feed”) to five (“definitely want to see in News Feed”). “It’s a new program,” Facebook’s Lars Backstrom said. “We’re actively iterating on it.”

“[We] want to get as many of the ‘five-star’ stories in the feed without missing any of them,” Backstrom explained. This line of thinking is why Facebook recently shifted its algorithm to punish clickbait headlines and “hoaxes,” both of which fall under Facebook’s definition of low-quality content.

Now, Facebook’s algorithm weighs those three big factors (your relationship with the poster, the type of content, and the activity on a post) to determine what to show you and what to bury. According to Mosseri, if you scrolled for long enough, you’d eventually see every single thing your friends posted, though the same doesn’t go for brands promoting content on Facebook.

“Our goal is to align our ranking as best as we possibly can with what people are telling us is interesting and relevant to them.” True to Facebook’s old “move fast and break things” motto, the Sisyphean task of deciding what shows up on the News Feed is changing all the time—and it’s certainly getting smarter.

Illustration by Max Fleishman