It’s no secret that Instagram has a bullying problem. A recent survey from anti-bullying group Ditch the Label canvassed over 10,000 U.K. teens and revealed that out of all the young people who said they experienced cyberbullying, 42 percent said it took place on Instagram.

More worryingly, there have been tragic teenage suicides thought to have ties to Instagram bullying.

Now, the social network is making changes to try to address the issue. “We can do more to prevent bullying from happening on Instagram,” Adam Mosseri, Head of Instagram, said in a statement. “And we can do more to empower the targets of bullying to stand up for themselves.”

Instagram is rolling out a series of new features to better protect users from bullying and negative comments. Here’s what we know so far about the upcoming tools.

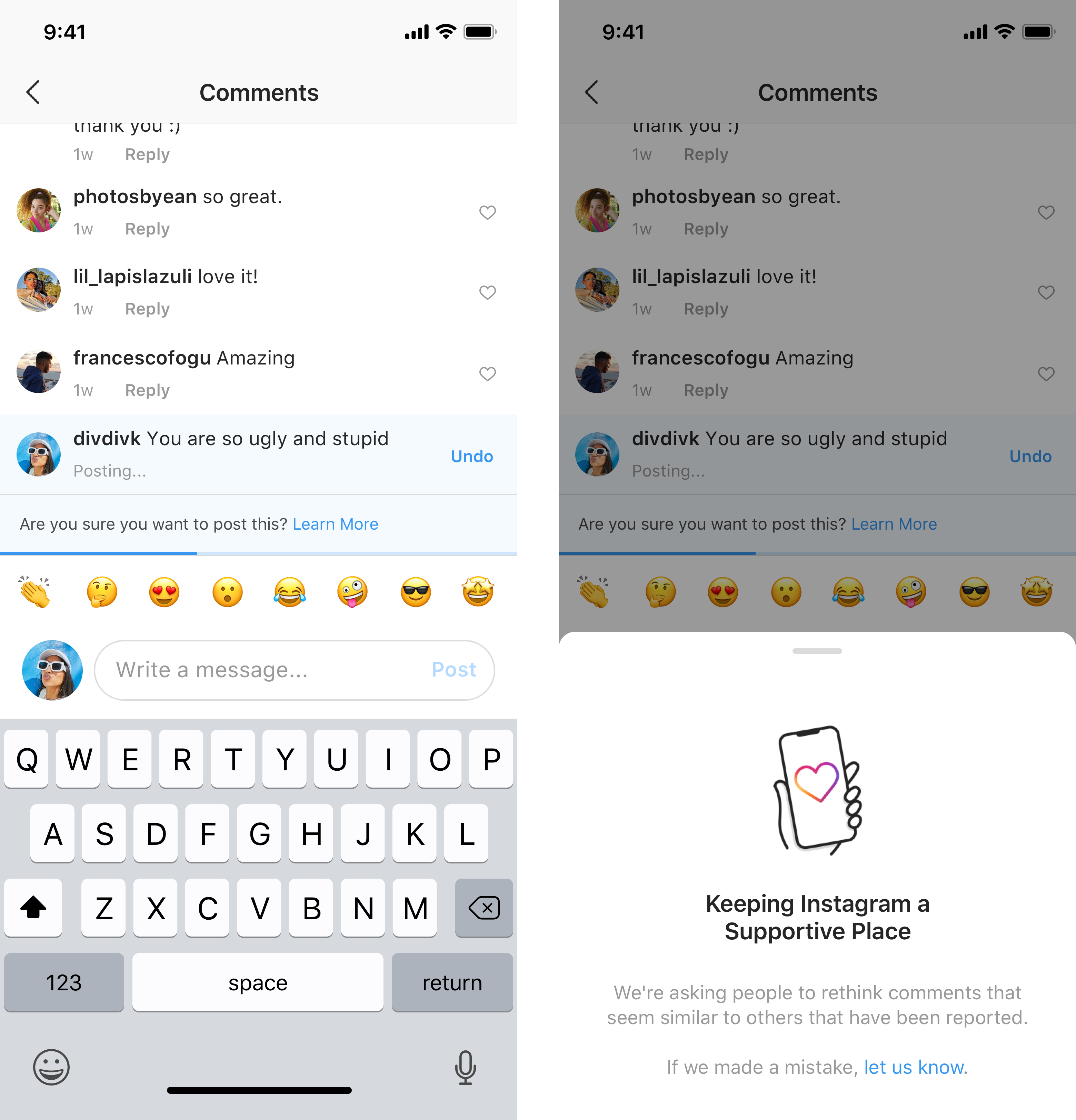

Negative comment alerts

Instagram has long used algorithms to try to detect harmful or hurtful comments, but it’s taking that a step further with new functionality.

Using artificial intelligence, a new automated feature claps back at a potentially negative commenter by alerting them to the fact their comment could be considered offensive before they post it.

This gives the commenter the chance to reconsider posting. “From early tests of this feature, we have found that it encourages some people to undo their comment and share something less hurtful once they have had a chance to reflect,” Mosseri explains.

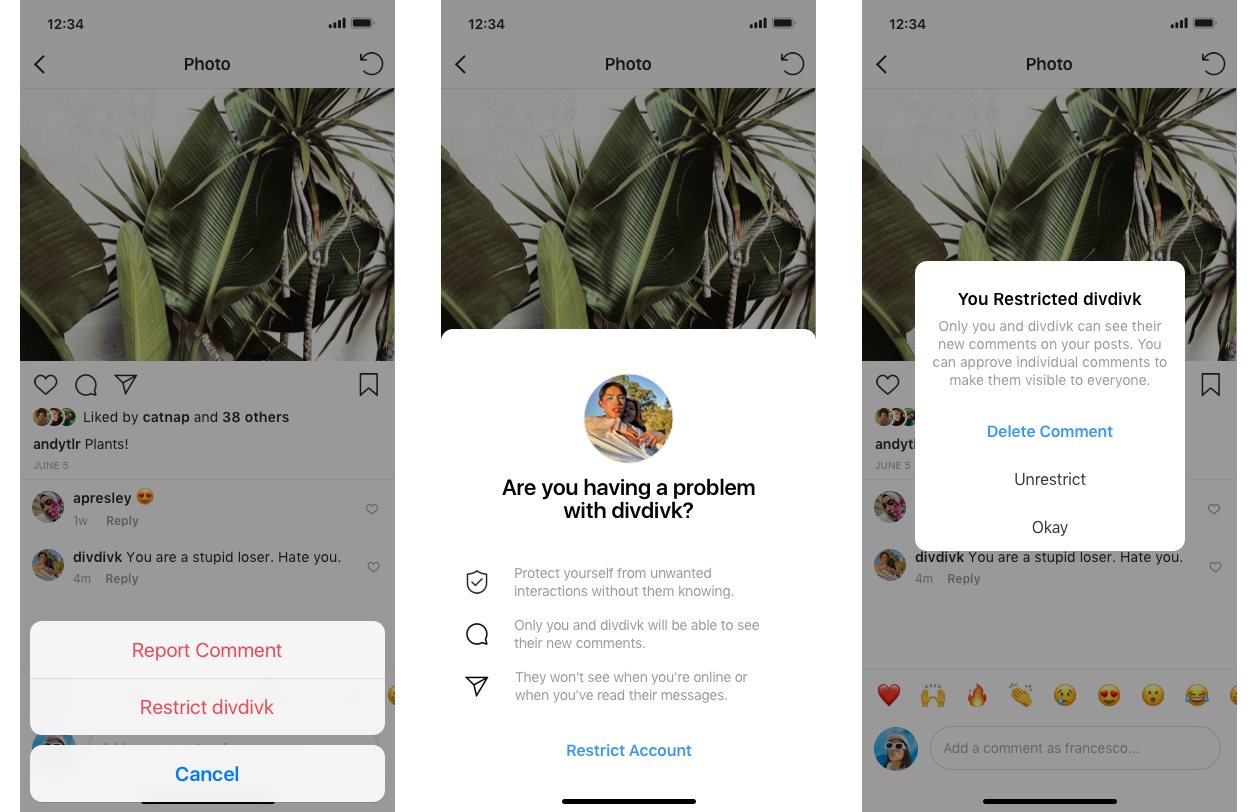

Restricting users

Another upcoming option Instagram announced is a new tool to restrict certain people’s access to your Instagram content and limit notifications.

Instagram explains that users who’ve experienced bullying on its platform aren’t always comfortable blocking, unfollowing, or reporting their bullies, especially if they know the users in real life.

Instagram is tackling that with a “restrict” feature. If a user chooses to restrict an abusive user (which that person will be unaware of), the bully’s comments on the user’s photos or videos will be visible only to the bully who posted them. The user’s other Instagram friends won’t see the abuse.

If a restricted person’s comment isn’t offensive, the user will have the option to approve it and make it visible to everyone else.

Anyone a user restricts also won’t be able to see whether that user has read their direct messages, or when that user is actively using the platform.

Too little, too late?

It’s certainly a step in the right direction for Instagram to bring more safeguarding tools on board, but more needs to be done. Mosseri says he “look[s] forward to sharing more updates soon,” so it would seem that Instagram isn’t satisfied with its anti-bullying toolset quite yet.