Facebook’s QAnon problem is no secret—while other social media platforms have attempted to ban the conspiracy theory, Facebook has done little to curb its popularity. And a breakdown of Facebook’s trending posts from Tuesday shows the influence this conspiracy community has in shaping conversation on the site.

Every weekday, New York Times reporter Kevin Roose tweets a list of the ten posts with the most interactions from U.S. Facebook pages. Usually, these trending lists are published without much commentary on a dedicated Twitter account. But on Tuesday, he took a step back to explain the stats, and how QAnon fits into them.

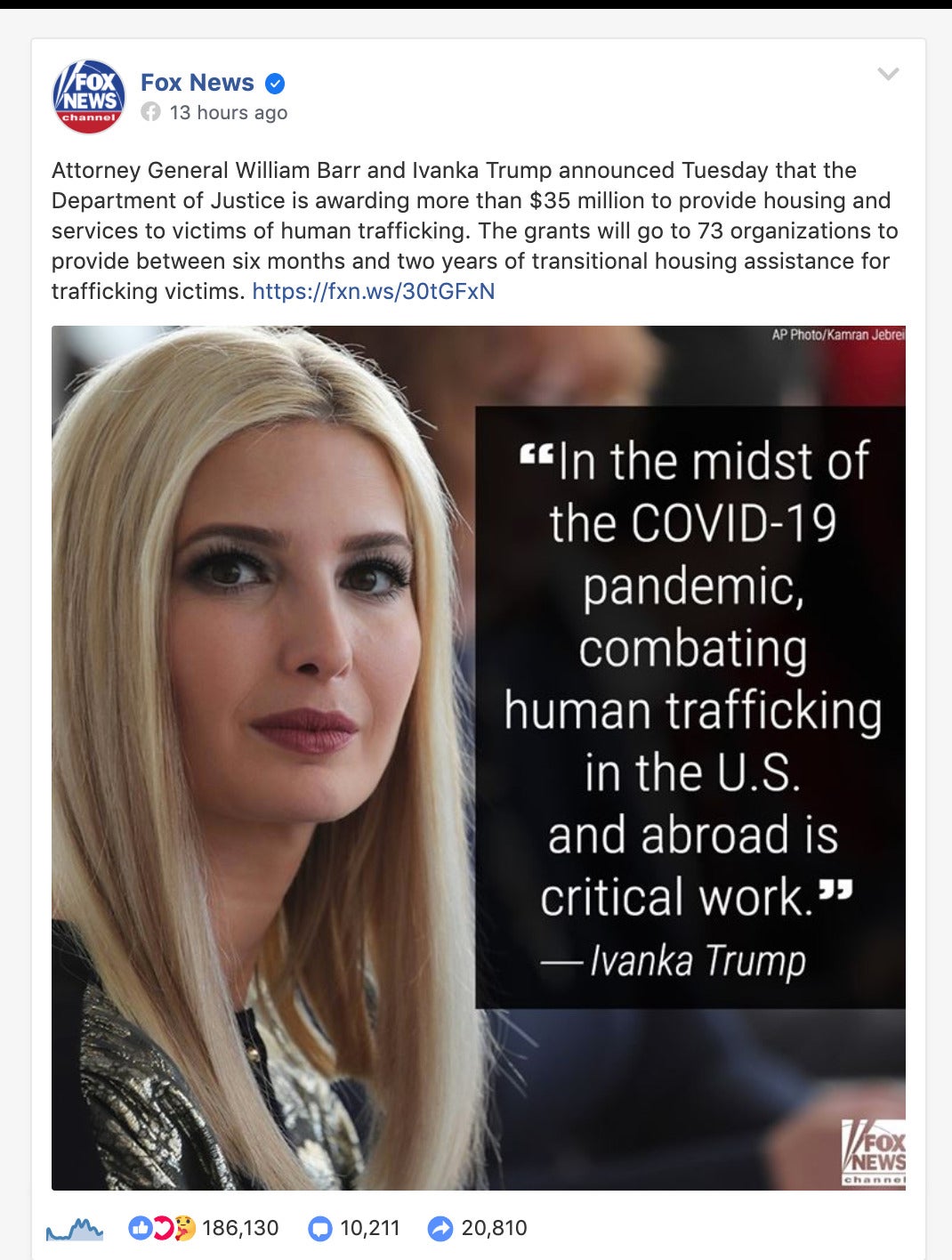

The trending list in question shows a post on President Donald Trump’s page had the most engagement that day, with the rest of the top ten including multiple posts from conservative commentator Ben Shapiro and Fox News. As Roose pointed out, though, those repeating sources weren’t the only overlap.

According to his analysis, the trending Facebook posts from President Trump, Ivanka Trump, and one from Fox News were all about the same topic: $35 million-plus in grant money the Justice Department is putting towards organizations aiding human trafficking survivors.

“Now, normally, you’d be surprised that a $35 million grant by an obscure federal agency would be the highest-performing story on Facebook,” Roose continued in his Twitter thread on the topic. “But people who follow this stuff know that stories about human trafficking, *especially* stories involving Trump, are a QAnon bat signal.”

The prevalence of trafficking, often involving children, within “elite” circles is a key tenet of the QAnon theory, as is the belief that Trump is fighting to stop it. So, you can see why this post would prove popular with the QAnon crowd, and it was, being shared on some of the larger conspiracy pages. Roose theorizes that it was also widely spread “user-to-user,” which helped it cinch those high-engagement spots.

“This is also why ‘banning QAnon’ isn’t really possible. It’s in the bloodstream. Right-wing influencers know they’ll get huge engagement on posts about child trafficking, etc., and they can post them without fear of being censored. (Because, after all, it’s just a news story),” Roose wrote, pointing to the fact that it’s one thing to ban misinformation; it’s another to deal with this virulent misinterpretation.

For sites like Facebook and Twitter, the latter of which is itself struggling to curb QAnon as pledged, it’s further proof that removing conspiracy theories isn’t as easy as blocking a few phrases or deleting some accounts.
