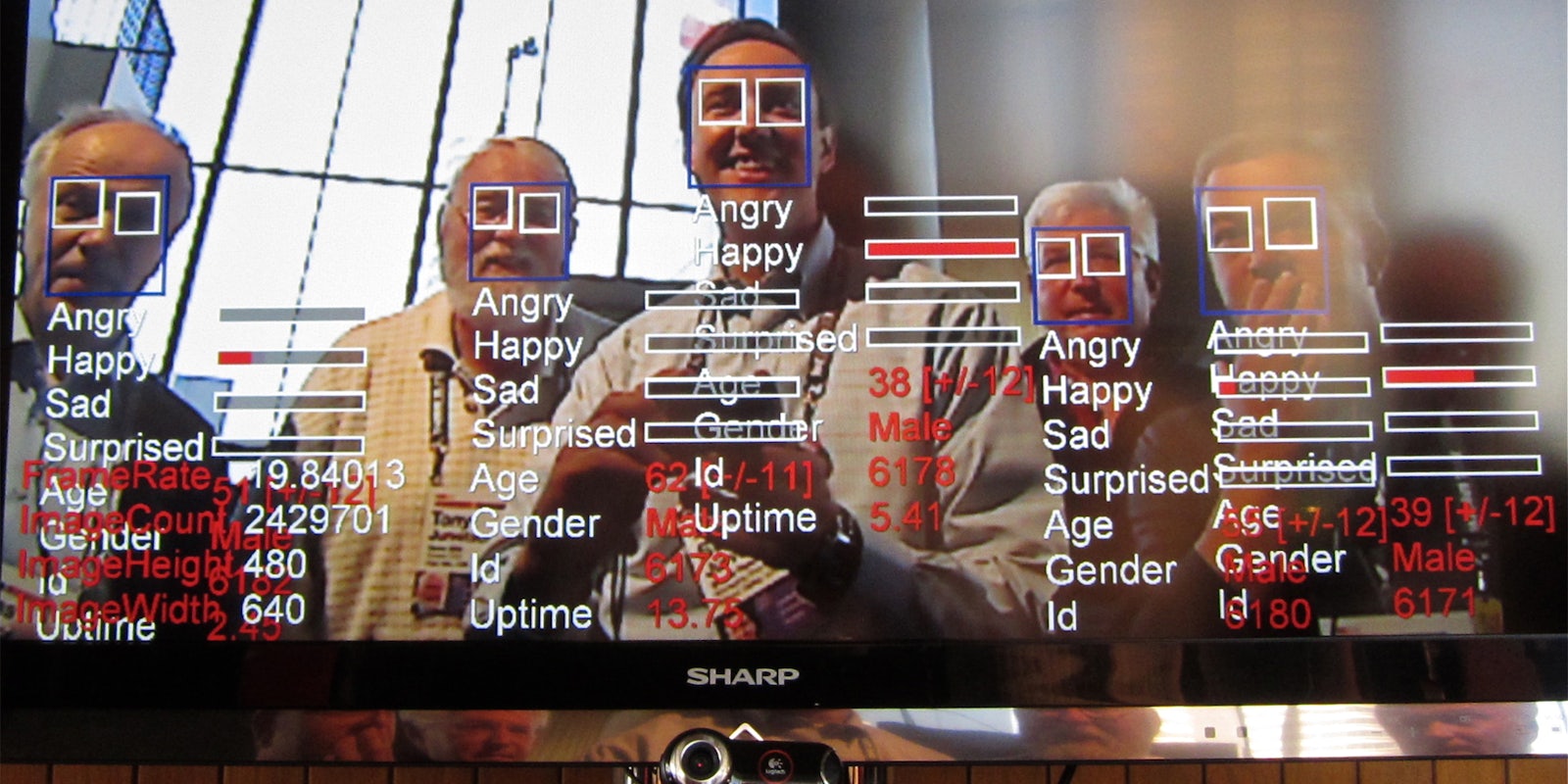

The power to have a computer sift through a crowd of faces on camera and pinpoint individuals is closer than ever to becoming a reality—but it’s not quite there yet.

A major document disclosure by the Department of Homeland Security (DHS) shows that the federal government is making progress in the development of surveillance technology that would use computers and video cameras to automatically scan crowds and identify people by their faces.

The DHS tested the Biometric Optical Surveillance System (a.k.a. BOSS) last fall, after two years of government-funded development, according to the New York Times. Although the system is not ready for use yet, documents and interviews with researchers show that significant advances are being made—much to the chagrin of privacy advocates.

The ability to automatically scan a crowd for those suspected of nefarious or criminal activity has long been a goal for law enforcement and security officials. Its development even predates the 9/11 attacks, with an early version of the technology having been tested at the 2001 Super Bowl. But until recently, the technology has been too unreliable and slow to be an effective surveillance tool.

A Freedom of Information Act request filed by the Electronic Privacy Information Center shows that efforts to improve the technology were ratcheted up during the wars in Iraq and Afghanistan. The stated primary goal was to better monitor polling places in those countries to prevent insurgent attacks. However, in 2010, the BOSS program was transferred to the DHS so that the technology could be further enhanced for domestic security. Since then, the federal government has spent $5.2 million, paid to independent contractor Electronic Warfare Associates, to improve the system.

The recent DHS disclosure does not say when this technology might be available for wide use, noting only that it’s not ready yet and development must continue. But independent experts in the field of facial recognition technology say it’s not far off.

“I would say we’re at least five years off, but it all depends on what kind of goals they have in mind,” Michigan State University computer and biometrics engineering specialist Anil Jain told the Times.

There is certainly a demand among law enforcement officials in the U.S. for this kind of technology, highlighted by the recent manhunt for the Boston Marathon bombing suspects. Investigators were able to identify the suspects by manually combing through countless photos and videos taken at the scene of the explosion. Facial recognition technology failed at the same task.

One of the major hurdles researchers still have to overcome with facial recognition technology is the quality of the data from which the software can pull. Ars Technica reports that the visual data isn’t always as clean as it is on TV crime shows.

“Despite advances in the technology, systems are only as good as the data they’re given to work with. Real life isn’t like anything you may have seen on NCIS or Hawaii Five-0. Simply put, facial recognition isn’t an instantaneous, magical process. Video from a gas station surveillance camera or a police CCTV camera on some lamppost cannot suddenly be turned into a high-resolution image of a suspect’s face that can then be thrown against a drivers’ license photo database to spit out an instant match.”

Along with video facial recognition, significant gains have already been made in the ability of law enforcement to scan faces in still photos taken under ideal conditions, such as passport pictures and mug shots. The FBI is spending $1 billion to create a Next Generation Identification network that will provide a national mugshot database for local police departments to use.

All of this is cause for consternation among privacy advocates, who fear this technology brings the U.S. closer to the kind of constantly surveilled world depicted in George Orwell’s 1984.

Ginger McCall, who filed the initial FOIA request to obtain this DHS disclosure, said rules need to be enacted to ensure the responsible use of facial recognition software.

“This technology is always billed as antiterrorism, but then it drifts into other applications,” McCall said. “We need a real conversation about whether and how we want this technology to be used, and now is the time for that debate.”

H/T BetaBeat