Facebook is losing the fight against fake news, so it’s abandoning a feature designed to stop the spread of disinformation and replacing it with a new method.

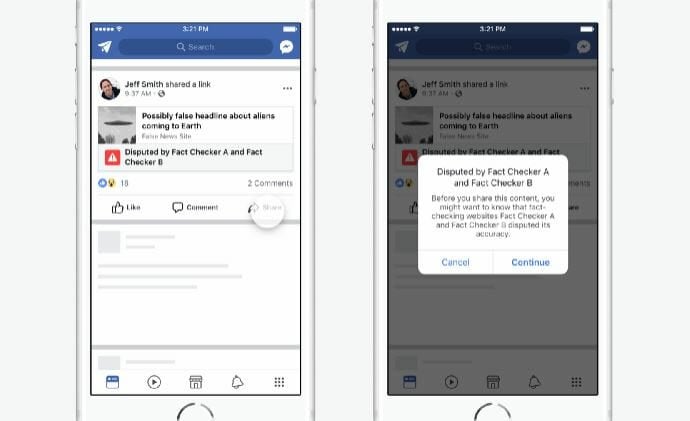

The social network explained in a blog post that it will no longer put disputed flags on potentially false news posts. Citing “academic research,” it claims placing strong images like red flags and visualizations on articles could further entrench readers in their strongly held beliefs—the opposite of what they’re designed to do.

That wasn’t the only reason to scrap the feature. Although the flag told readers that fact-checkers had found problems with an article, it was too difficult for users to tell exactly why a post had been marked. Facebook also says the disputed flag slowed its operations: it required two fact-checkers per article, and it was applied only to articles rated “false,” not to those rated “partly false” or “unproven,” which may have misled readers.

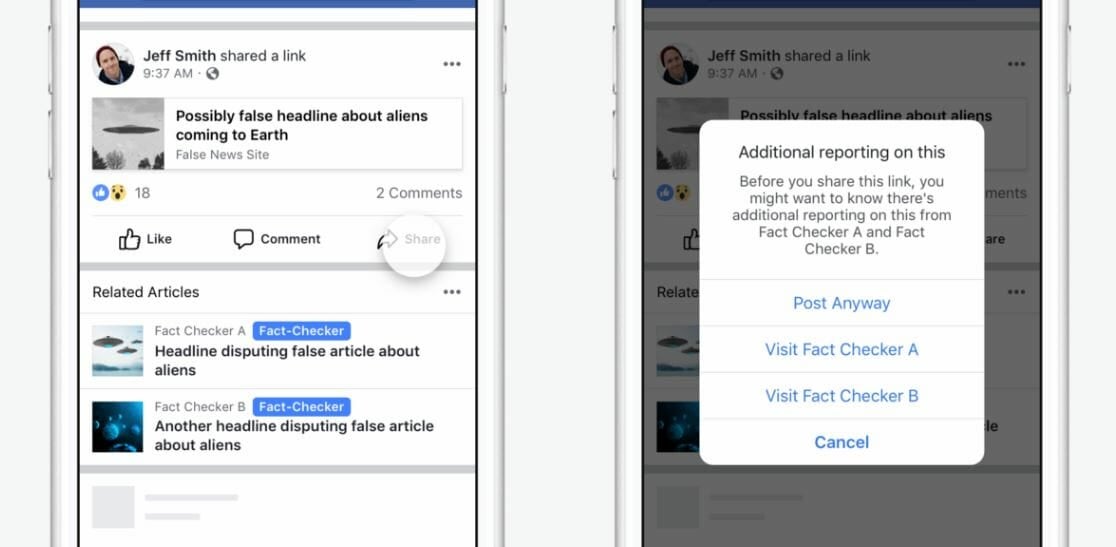

Instead, potentially false or misleading news pieces will be followed by related articles that give readers more context. The “related articles” feature, announced in August, places additional reporting under popular or disputed articles. Related articles don’t reduce clicks on fake news any more than the disputed flag did, but they do make people less likely to share misinformation.

“During these tests, we learned that although click-through rates on the hoax article don’t meaningfully change between the two treatments, we did find that the Related Articles treatment led to fewer shares of the hoax article than the disputed flag treatment,” three Facebook researchers wrote in a Medium post.

Related articles also require only one fact-checker, work with all of Facebook’s ratings, and avoid strong language that could sway readers. The company says it will also begin researching how people decide whether news is real or fake based on the sources they trust most.

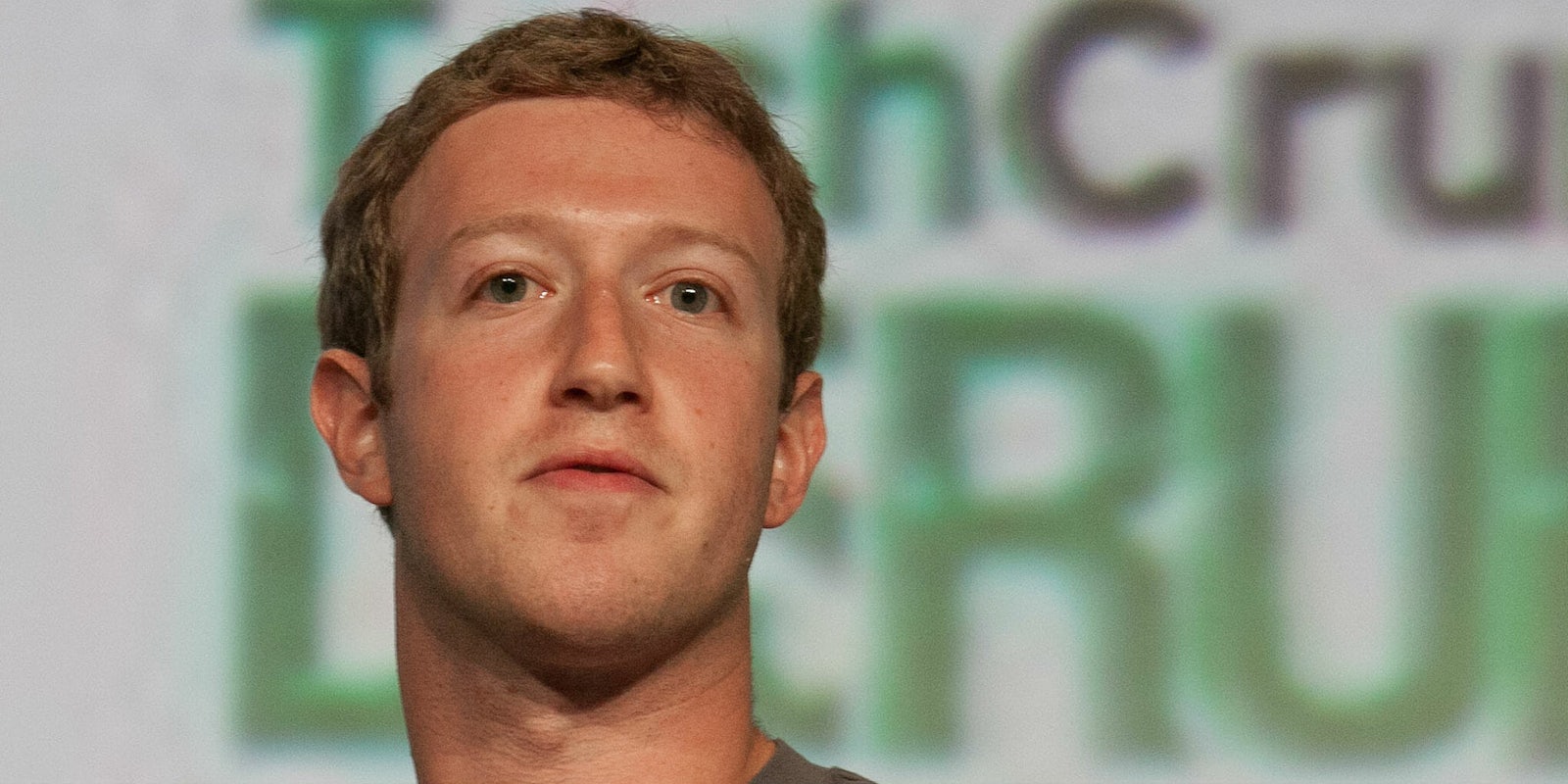

Facebook’s fight against fake news comes not long after CEO Mark Zuckerberg embarrassingly dismissed it as a non-issue: “Personally, I think the idea fake news on Facebook… influenced the election in any way is a pretty crazy idea.” But after facing fierce criticism for its role in the 2016 presidential election and admitting to selling $100,000 in ads to a Russian troll farm, the social network has been left with no choice but to face the problem head-on.