A woman is furious after she said she came across a YouTube advertisement promoting AI-generated porn featuring a busty young girl in a school uniform.

In a viral post that has racked up 1.4 million views, X user Alice (@aliceinwunderl3) argued the ad promotes AI-generated child sexual abuse images and said the company’s executives should be held accountable for promoting the exploitative tech. She also provided a screenshot of the ad.

“AI generated ANY pics,” read the advertisement.

The woman said she came across the ad when she opened YouTube and that she reported the content as inappropriate.

“I booted YouTube and got this advert,” the post’s caption read. “I want YouTube executives to go straight to prison.”

I booted YouTube and got this advert. I want YouTube executives to go straight to prison. pic.twitter.com/cyodHl1snc

— Aliceinwunderland (@Aliceinwunderl3) January 27, 2024

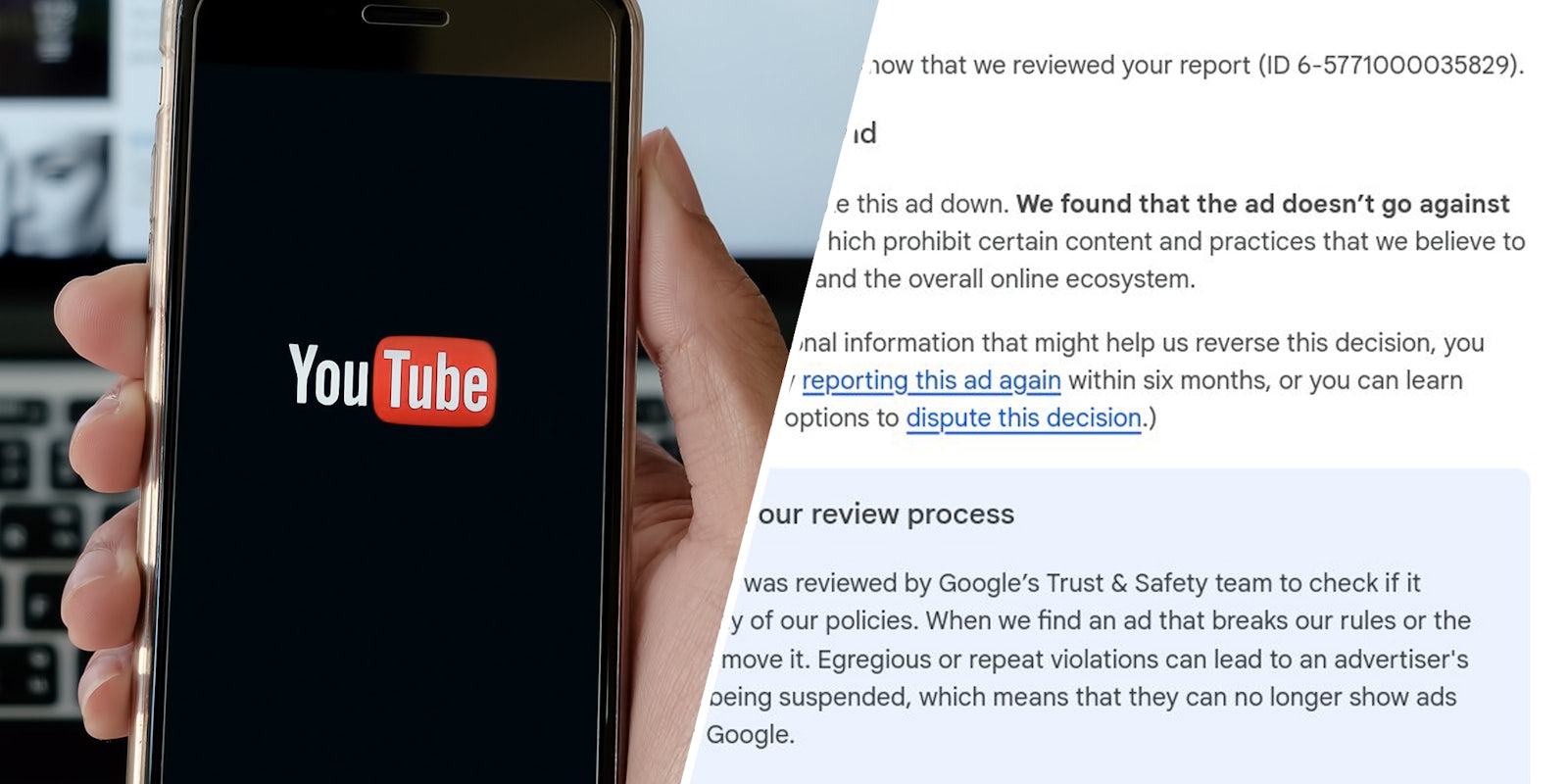

According to a screengrab provided by Alice, Google responded to the report she filed and said the advertisement does not go against the company’s rules.

“We decided not to take this ad down,” the response read. “We find that the ad doesn’t go against Google’s policies, which prohibit certain content and practices that we believe to be harmful to users and the overall online ecosystem.”

This response prompted even more outrage from the X user.

“Update: I want YouTube executives to go to a place worse than prison,” she captioned the post, which contained the screenshot of the company’s response.

The woman also continued to call out the platform for hosting the advertisement.

“@Youtube seems to be happy to advertise tools that hint/wink/nudge AI generated child porn,” she posted. “That’s what this is. ‘Mädchenfotos’ with a school girl and pornhub emphasis on ‘ANY’. This is what they’re happy to do.”

Other X users were also upset and shared their takes on the platform.

“Saw the same ad, reported it with a detailed explanation as to why this is inappropriate and unethical,” X user @_AngeTange_ responded. “Received the exact same response.”

“Interesting to learn that @Google happily supports AI generated child pornography, so long as it earns them ad revenue,” user @Kastorcaster added. “I think my state representatives will be interested in learning this.”

A TeamYouTube account with a gold badge responded and said it planned to look into the issue.

“Thx for bringing this to our attn!” it responded to the post. “We take this kind of stuff *very* seriously & will pass this on to relevant teams. Appreciate your patience while they take a closer look into this.”

The Daily Dot reached out to Alice via X direct message and Google via email for comment and more information.

Update Feb. 5, 6:38am CT: After review, the ad was removed by Google.

“We have strict ads policies that govern the types of ads and advertisers we allow on our platforms,” a Google spokesperson told the Daily Dot via email. “Under our Inappropriate Content Policy, we don’t allow ads on our platform that contain sexually explicit content. We’ve removed the ad in question and taken appropriate action against the associated account.”

Under the company’s “Inappropriate Content Policy,” ads containing sexually explicit content are not allowed. To date, Google reported that it has “removed over 5.2 billion ads, restricted over 4.3 billion ads and suspended over 6.7 million advertiser accounts.”