Twitter recently rolled out an updated UI that, among other minor changes, replaced square avatars with round ones. Users seemed to be confused on one point about the update, though: What’s being done about the Nazis?

Indeed, Twitter is beset with actual Nazis who are neither subtle nor ashamed of their affiliations, and the company hasn’t done much to drive them off. White supremacist leader Richard Spencer, who led a “heil Trump” chant after Donald Trump won the 2016 election, was briefly suspended, but his account has since been reinstated—and now it’s verified.

Other verified right-wing accounts, like Tim “Baked Alaska” Treadstone, make anti-Semitic gas chamber jokes with no consequences:

Verified "alt-right" leader Baked Alaska (Tim Treadstone), who just held a so-called "free speech" rally, has come out as a literal Nazi pic.twitter.com/dqJyORgt8g

— Ben Norton (@BenjaminNorton) June 18, 2017

There’s also the American Nazi Party, which doesn’t have a verified account but continues to shitpost racist memes to its 12,000 followers, with no intervention from Twitter.

And that’s not to mention the dozens of smaller accounts with names like “Kill All Jews” and “Hitler Was Right.”

Twitter's Nazi problem is still out of control https://t.co/EH8VGqg6P2 pic.twitter.com/ynzq631BCG

— The Outline (@outline) June 16, 2017

In November 2016, Twitter started rolling out new anti-harassment tools that could theoretically be used to fight Nazis on the platform, including the option to report an account for “hateful conduct” and cite up to five tweets as evidence. Twitter’s “hateful conduct policy” bans tweets that target people for their race, ethnicity, or national origin, which certainly sounds like it would cover Nazism.

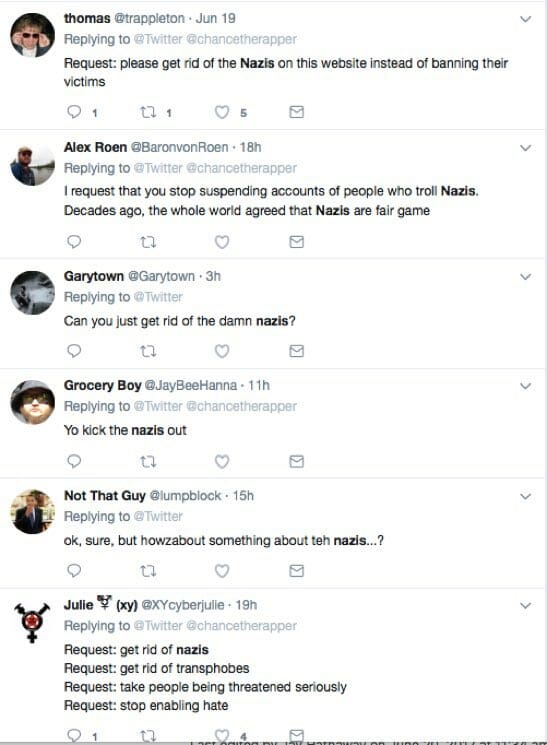

Yet it’s often the Nazis themselves who benefit from the ability to report harassment on Twitter. Users who publicly challenge Nazis, white supremacists, the alt-right, or their associates find their accounts quickly suspended, silenced by a barrage of harassment reports. Being banned for fighting with Nazis has become such a common occurrence that it’s now a meme.

The good news is Twitter is figuring out how to protect people from abuse. The bad news is the main people they want to protect are Nazis https://t.co/Ue1lw6H361

— Jeet Heer (@HeerJeet) June 18, 2017

https://twitter.com/trappleton/status/876792741056917504

Me: Can you ban this verified nazi for telling me to kill myself?

Twitter: No you said fuck once

Me: But..

Twitter: Icons are circles now

— Flea @ FWA (@Flea) June 15, 2017

https://twitter.com/ColinTDF/status/877155131388825601

https://twitter.com/swaggy_2_dope/status/877122821666725888

Literal Nazi : verified

Saying Fuck a couple times, restricted or suspended accounts. @Twitter logic.

— TJ (@Wpgtjross) June 20, 2017

https://twitter.com/JustABear614/status/876942352404934656

https://twitter.com/JustABear614/status/875648535785488387

Bad news. The trove already has a verified twitter account and I got suspended for telling it to fuck off. https://t.co/FID75vCB9y

— Palle! (@Palle_Hoffstein) June 20, 2017

In many cases, people are telling the Nazis to “fuck off” or “go fuck themselves,” which makes it easy to counter-report them for profanity. That’s not always the case, though. Here’s one example of a guy who called the American Nazi Party “dumb stupid idiots whose butts smell”—no foul language involved—and ended up with a 24-hour suspension.

https://twitter.com/Type1DIABEETUS/status/876452160468398080

This is perhaps the most ridiculous example of Twitter’s backward Nazi fix, but it’s not the worst. Daniel Sieradski, an Occupy Wall Street activist and “Antifa’s most prominent Jew,” was permanently suspended for reasons that are still unclear, but he believes he was targeted by Baked Alaska and his alt-right fans.

Baked Alaska started a campaign against Sieradski, who went by @selfagency on Twitter, after Sieradski suggested Baked Alaska was a Nazi who needed to be “punched.”

https://twitter.com/bakedalaska/status/870392640759504897

In the process of the Antifa-vs.-alt-right feud, Sieradski’s personal information was exposed and his family was allegedly threatened.

@selfagency was doxxed and sent death threats. His family's lives were threatened. And @Support @twitter chooses to suspend HIM for this

— Tiocfaidh ár lá (@OwenRBroadhurst) June 8, 2017

https://twitter.com/danielburs/status/872888391049850881

https://twitter.com/addylerer/status/872884069272621056

https://twitter.com/AGreenAutumn/status/870471588457701376

His account is still offline, and a backup account he briefly used has been deleted. His public Facebook profile has also disappeared. Meanwhile, tweets threatening violence against Sieradski and his children remain online:

https://twitter.com/RectalThreat/status/870467251220078592

Sieradski did not respond to requests for comment from the Daily Dot.

Between cases like this and the people who have waited months for Twitter to take down Nazi death threats against them, it seems clear that Twitter’s current system of reporting abuse is not working.

“Get rid of the Nazis” is not a specific recommendation, though. Twitter should do something, but the solutions aren’t cheap or easy. Automated suspensions based on user reports seem to have backfired. Human moderators are expensive, and it might be impossible for them to read every report and track down every Nazi on the platform. What should be done?

One argument that’s been gaining traction recently is that Twitter already has a Nazi filter—the company just needs to turn it on.

Twitter has the capability to “withhold” certain tweets in specific countries, which lets the company comply with local laws while avoiding worldwide censorship. For legal reasons, Twitter users in Germany and France don’t see Nazi tweets and accounts.

Some have suggested that Twitter should just turn on that filter worldwide, or that a third-party autoblocker service could use the “blocked in Germany” flag to identify tweets and accounts to block.

Idea:

Since Twitter automatically blocks nazis in Germany, write an autoblocker service that runs in Germany and checks accounts

— Katelyn Gadd (@antumbral) June 19, 2017

a thing I have just learned: @Twitter knows perfectly well which accounts belong to Nazis and shuts them down – see thread for examples. https://t.co/dY2eKkb1ut

— Anthony Oliveira (@meakoopa) June 19, 2017

Reminder that Twitter can identify Nazis PERFECTLY WELL, because THEY HIDE THEIR ACCOUNTS IN GERMANY AND FRANCE.

— cohost.org/adrienne | @adrienne@treehouse.systems (@adrienneleigh) June 19, 2017

For example, this is what users in Germany see when they try to visit the American Nazi Party’s Twitter page:

I’m not even seeing the avatar here. pic.twitter.com/1fhakQDYSB

— Alexander Repty (@arepty) June 19, 2017

This approach might not work as a catch-all, though: German and French laws could be too broad, or not broad enough, and they might miss important context. Those countries’ bans on Nazism don’t necessarily parse the many shades of neo-Nazism being expressed in America right now. Plenty of unabashed white supremacists, anti-black and anti-Muslim groups, and people who want to turn America into a “white ethnostate” also explicitly claim they’re “anti-Nazi.”

https://twitter.com/MechMK1/status/876974318911139840

Even banning outspoken Nazis is more complicated than it looks. Expanding the filter would effectively apply French or German law to users in other countries, which opens Twitter up to criticism for impeding free speech. Twitter is a private company that doesn’t owe anyone a platform, but in some ways, it’s in the same predicament as its users when it comes to Hitler fanboys. It can stand up to Nazis, but the Nazis are loud and at least somewhat organized, which means they can make challenging them very annoying.

Twitter has said it believes that “the open and free exchange of information has a positive global impact, and that the Tweets must continue to flow,” which makes sense when the flow of the tweets is crucial to your bottom line.

The most cynical interpretation of the situation is that it’s not worth Twitter’s time to solve the Nazi problem because Nazis drive controversy, which drives engagement. People might be using Twitter to complain about how infested Twitter is with white supremacists, but at least they’re using Twitter!

https://twitter.com/OmanReagan/status/877045463471472640

Twitter declined to comment to the Daily Dot on the viability of using “withheld in Germany” as the basis for some kind of Nazi filter. The company pointed instead to its hateful conduct policy and noted an increase, since the November 2016 update, in the percentage of hate speech reports that are reviewed in a timely manner.

“Twitter … sped up its dealing with notifications, reviewing 39 percent of them in less than 24 hours, as opposed to 23.5 percent in December,” the company reminded us.

Twitter did not comment on the sentiment, now widespread on its site, that those reports benefit white supremacists more than their targets.