The president is tweeting again, on his phone and moving so fast that he sometimes seems to forgo proofreading. Seconds later, he’s reveling in interactions from the thousands of supporters and detractors who hasten to comment and retweet, amplifying his message far beyond the 65 million who follow him. Pleased, he retweets his favorite morsels of flattery and support, even that one about sharks.

But those compliments aren’t all coming from real people.

They may look like people, and some even tweet like people, but they are decidedly not all people. Many—no one knows precisely how many—are Twitter bots, or bits of software designed to replicate the activity of real people. Every time Trump single-finger jabs a message to the world, bots automatically retweet, comment, and like it.

Last week, the New York Times’ cybersecurity reporter Nicole Perlroth tweeted that there is “widespread consensus among information security professionals that Trump’s twitter account is being buoyed by bots and @Twitter is doing nothing to address it.” In May 2017, the Daily Dot found that 900,000 fake accounts were following the president.

Bots have come a long way in just a few years. Last month, University of Southern California researchers published findings based on the Botometer algorithm: of the nearly 245,000 accounts that participated in the Twitter political dialogue in the 2016 election and 2018 midterms, approximately 31,000, or nearly 13%, were bots. Analyzing the bots, they found evidence of improved sophistication at mimicking human behavior from 2016 to 2018.

In 2016, bots were mostly just timed retweeters; in 2018, they retweeted less and interacted more, often posing polls and questions.

In addition to software-controlled bots programmed to mindlessly retweet or create polls, there is an unknown number of “trollbots” that clog Trump’s comment section. While most of these accounts are probably controlled by humans, they exhibit behavior that couples the repetitive nature of a bot with the abusive conduct of a troll, hence the name. The term was coined by creators of the BotSentinel algorithm, which identifies trollbots based on activities that break Twitter’s rules.

“We searched for accounts that were repeatedly violating Twitter rules and we trained our model to identify accounts similar to the accounts we identified as ‘trollbots,’” the website states. The tool does not factor in politics, religion, ideology, location, or frequency of tweets to determine a rating of 0-100% trollbot (75% and over are classified as trollbots).

Yet it has identified far more trollbots in the MAGA set than from the left, which BotSentinel says is because there are more liberals using its tool. Indeed, based on the Daily Dot’s analysis of hundreds of accounts, there are far more MAGA trollbots in Trump’s timeline—and they’re making a mess.

Trollbots at work

Using the BotSentinel to analyze accounts that responded to Trump’s Sept. 27 tweet, “I AM DRAINING THE SWAMP!” the Daily Dot found that nine of the first 10 pro-Trump responders were flagged as alarming or problematic, meaning they exhibit “activity and patterns” that respectively mimic or are similar to a trollbot account. Of the first 10 anti-Trump or resistance responses, four were classified as such.

That pattern, in which a majority of pro-Trump responders and a minority of anti-Trump responders were flagged, was borne out across several of Trump’s tweets. It also applied to hashtags that are likely to attract trollbots, such as #Resistance and #TrumpLandslideVictory. The Daily Dot excluded ambiguous tweets from the data set.

Trollbots share many characteristics. Analyzing hundreds of accounts flagged as such, the Daily Dot determined that the typical trollbot often has a handle that ends in a string of numbers; tweets and/or likes an inordinately large number of times per day; has either a patriotic, Trump-featuring, or no header image; and has an eerie focus on a single subject or two related subjects, such as Trump and gun control. Trollbots’ profile descriptors tend to include words and hashtags like patriot, MAGA, KAG, resistance, resist, America, American, QAnon, Christian, and, oddly, sports enthusiasm.

Trollbots’ followers can range from none to hundreds of thousands. One pro-Trump account, @chatbyCC, that has more than 300,000 followers received a trollbot rating of 87% on multiple occasions on different days. The account appears to retweet every single Trump tweet, yet the Botometer doesn’t identify this account as an actual bot. According to its data, however, from Sept. 24-30, the account tweeted 4,000 times, or an average of 571 times a day, for a rate of one tweet every two-and-a-half minutes without subtracting time for sleep. Since its Aug. 2012 launch, the account has tweeted more than 400,000 times. (Contrast that with prolific tweeter Donald Trump’s 45,000 tweets since March 2009, not including deleted tweets, of course.)
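The rate quoted above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes Sept. 24-30 counts as seven full days; the function and variable names are illustrative and are not part of Botometer or BotSentinel.

```python
# Figures taken from the paragraph above; names are illustrative only.
TWEETS_IN_WINDOW = 4_000   # tweets reported for Sept. 24-30
DAYS_IN_WINDOW = 7         # assumption: Sept. 24 through Sept. 30, inclusive
MINUTES_PER_DAY = 24 * 60


def tweet_rate(tweets: int, days: int) -> tuple[float, float]:
    """Return (tweets per day, minutes between tweets), with no allowance for sleep."""
    per_day = tweets / days
    minutes_between = MINUTES_PER_DAY / per_day
    return per_day, minutes_between


per_day, minutes_between = tweet_rate(TWEETS_IN_WINDOW, DAYS_IN_WINDOW)
print(f"{per_day:.0f} tweets per day, one every {minutes_between:.1f} minutes")
```

Under those assumptions the result matches the figures in the text: roughly 571 tweets a day, or about one every two and a half minutes around the clock.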

The @real_defender account has tweeted far less—just 6,000 times since June 2009—but maintains a trollbot rating of 94%. The nearly 34,000 who follow this account, which claims to have been “proudly retweeted by POTUS,” are treated to dozens upon dozens of tweets per day, nearly all responses to Trump’s tweets with the rare tweet to Rep. Alexandria Ocasio-Cortez (D-N.Y.) and an actual tweet of its own peppered in.

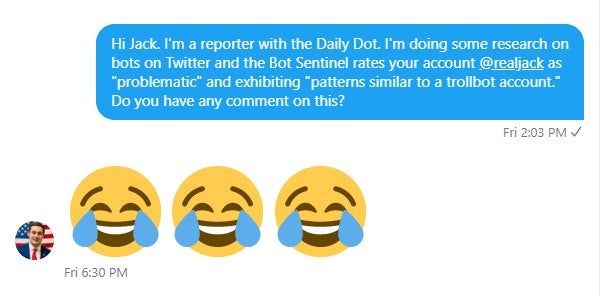

Among trollbots you’ll find accounts that are definitely associated with real people, such as far-right pundit Charlie Kirk (67% trollbot rating on multiple occasions) and former presidential adviser Sebastian Gorka (61% on multiple occasions), both of whom have verified accounts, and 22-year-old right-wing pundit Jack Murphy (@RealJack). On two separate occasions, the BotSentinel rated Murphy’s account as problematic. Asked about this via Twitter message, Murphy was amused.

On Sept. 27, Trump himself received a 100% trollbot rating; on Sept. 30 and Oct. 2, he was rated as exhibiting “moderate tweet activity,” meaning he is not a trollbot. Many among the pro-Trump set receive moderate trollbot ratings (of 25-49%): Eric Trump, Donald Trump Jr., Candace Owens, Diamond and Silk, and Rudy Giuliani.

Contrast these ratings with those of prominent accounts that often tweet disparagingly of Trump, like Tony Posnanski, Molly Jong-Fast, Alyssa Milano, and Bette Midler; all are rated normal (0-24% trollbot), or as not exhibiting trollbot behavior. Michael Avenatti received a moderate trollbot rating.
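The rating bands mentioned so far can be collected into a small lookup. This is a sketch for illustration, not BotSentinel’s code: the article gives 0-24% as normal, 25-49% as moderate, and 75% and over as a trollbot, but it does not name the 50-74% band, so the label used for it below is an assumption. The function name `rating_band` is hypothetical.

```python
def rating_band(percent: float) -> str:
    """Map a 0-100 trollbot rating to the band names used in this article."""
    if not 0 <= percent <= 100:
        raise ValueError("rating must be between 0 and 100")
    if percent >= 75:
        return "trollbot"      # stated: 75% and over are classified as trollbots
    if percent >= 50:
        return "problematic"   # assumption: the article does not name the 50-74% band
    if percent >= 25:
        return "moderate"      # stated: 25-49%
    return "normal"            # stated: 0-24%


# Ratings reported earlier in the article.
for name, rating in [("@chatbyCC", 87), ("@real_defender", 94), ("Charlie Kirk", 67)]:
    print(name, rating, rating_band(rating))
```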

So why do prominent, outspoken Trump supporters seem to get higher trollbot ratings than prominent, outspoken Trump detractors? In spite of what far-right trolls like Laura Loomer may want to believe, this is not evidence of an anti-conservative Bot Sentinel or Twitter bias. It is purely due to the activity of these accounts.

Under Twitter’s rules, which were updated last month, users are prohibited from using the platform “in a manner intended to artificially amplify or suppress information or engage in behavior that manipulates or disrupts people’s experience on Twitter.” Intentionally spreading lies and conspiracy theories falls into this category.

Based on these and other rules, Bot Sentinel classifies trollbots as accounts controlled by humans that “exhibit toxic, troll-like behavior.” They may “target and harass” specific accounts as solo acts or as part of coordinated campaigns. “Some of these accounts frequently retweet known propaganda and fake news accounts, and they engage in repetitive bot-like activity,” it states. Some may actually be bots; some may be people who work alone or in a network, including as part of a foreign influence campaign, to impact discourse, public opinion, and elections.

When an account parrots Trump’s false claim about changes to the rules governing whistleblowers, or links to a story by a known purveyor of fake news and conspiracy theories, or simply tweets nonstop about or at a single subject, it becomes more likely to be identified as a trollbot. Working in concert, either accidentally or intentionally, with actual bots that spread propaganda and misinformation across Twitter, these accounts obfuscate the truth and make it increasingly difficult for many to tell the difference between fact and fiction. Therein lies the problem.

The question that many, including the Times’ Perlroth and other influential people, have for Twitter is why it isn’t doing more to fight the war between lies and truth being waged on its platform. (A representative from Twitter did not respond to the Dot’s questions for this article.)

As the 2020 election cycle looms, it becomes increasingly important that all social media companies do their part to promote truth and stifle lies on their platforms. Last year, Poynter’s Daniel Funke wrote that while Twitter has made some strides in this area, it has at best done the “bare minimum” while keeping its head down and trying to avoid notice. Meanwhile, he wrote, its competitors are cleaning up their acts.
