This week, Facebook gave us a new way to learn about the stories populating our News Feeds. While the update itself is minimal, one feature stands out: a new tool that lets you see which of your friends have shared a particular story. With it, you can find out which of your friends are most guilty of sharing false news on Facebook.

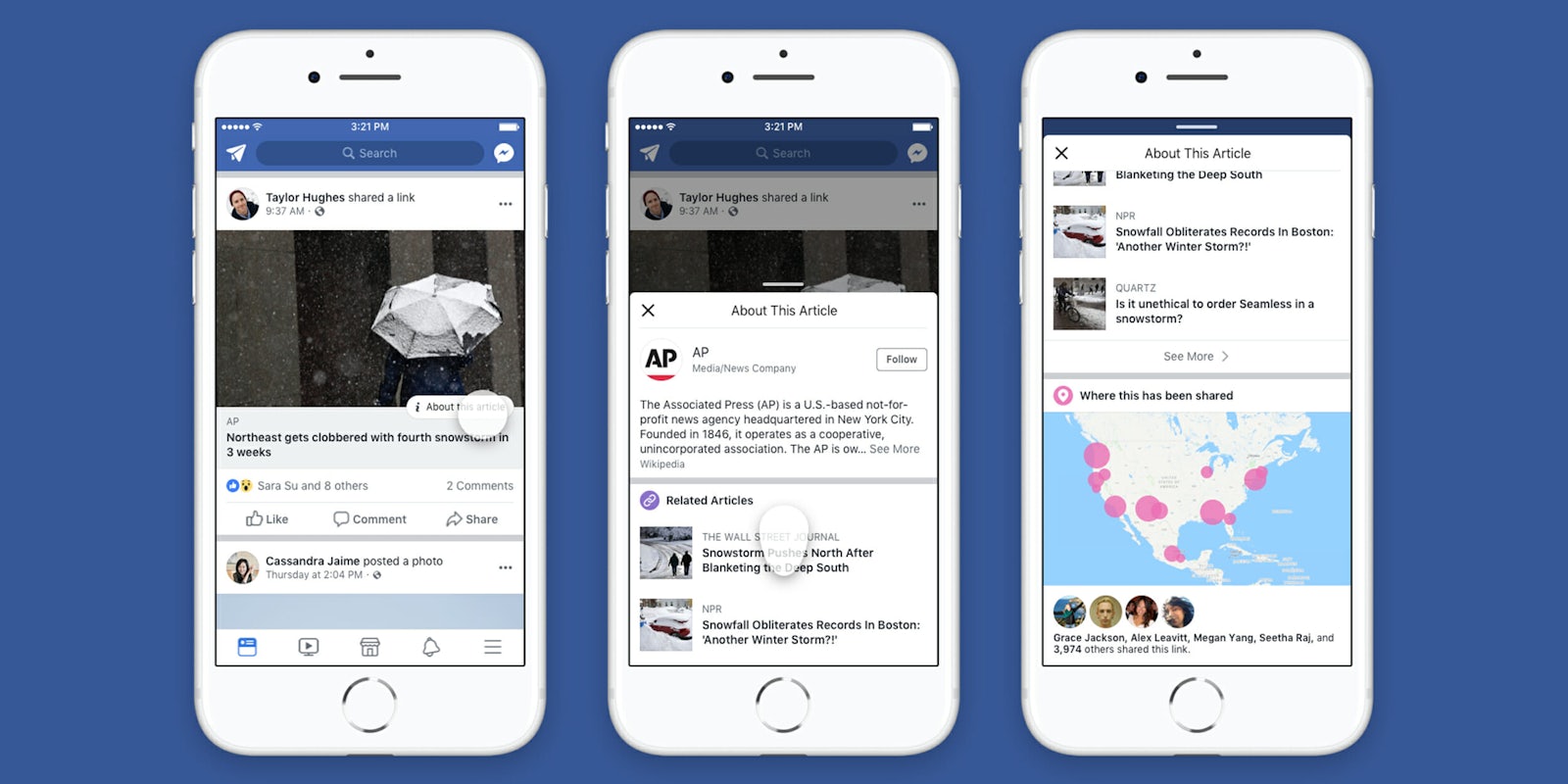

To access this feature, scroll through your feed and find a post that links to a news story. Tap the “i” icon in the upper-right corner of the headline area. There you'll find information about the article, including its publisher, related articles, and a heat map of where it has been shared most frequently. This menu also lists the friends who have shared the piece.

When you see a particularly questionable piece in your feed, you can tap to see who else has shared it. Over time, you may learn which friends tend to be the biggest offenders when it comes to sharing false news and incendiary left- or right-leaning propaganda. (Alternatively, the tool may simply confirm what you already suspected.)

At this level, though, Facebook’s tool is less useful than it is a novelty. Confronting people on Facebook has never been a good way to change their behavior. Reaching out to a friend to say “Hey, did you know you’ve repeatedly been sharing false news?” isn’t likely to go over well. Studies have shown that fake news spreads faster than real news, in part because fake stories are often more interesting than the real thing. They also tend to appeal to our personal biases and emotions.

Facebook is still in hot water over its involvement in the Cambridge Analytica privacy scandal, which may now affect as many as 87 million people, most of them U.S. residents. Even so, this may be a case where some active data aggregation and intervention could help keep people from sharing false news in the future. Instead of friend anniversaries and other reminders at the top of your News Feed, Facebook could use the data it is evidently gathering on who shares false news, and where, to post a weekly PSA about the fake stories making the rounds in your area. When a user shares a certain number of proven-false stories, Facebook could also send a notification along the lines of “You’ve shared 3 stories this week that contain false or misleading information. Here are some suggestions for identifying fake news.”

Facebook CEO Mark Zuckerberg is set to testify before Congress next week.

H/T Mashable