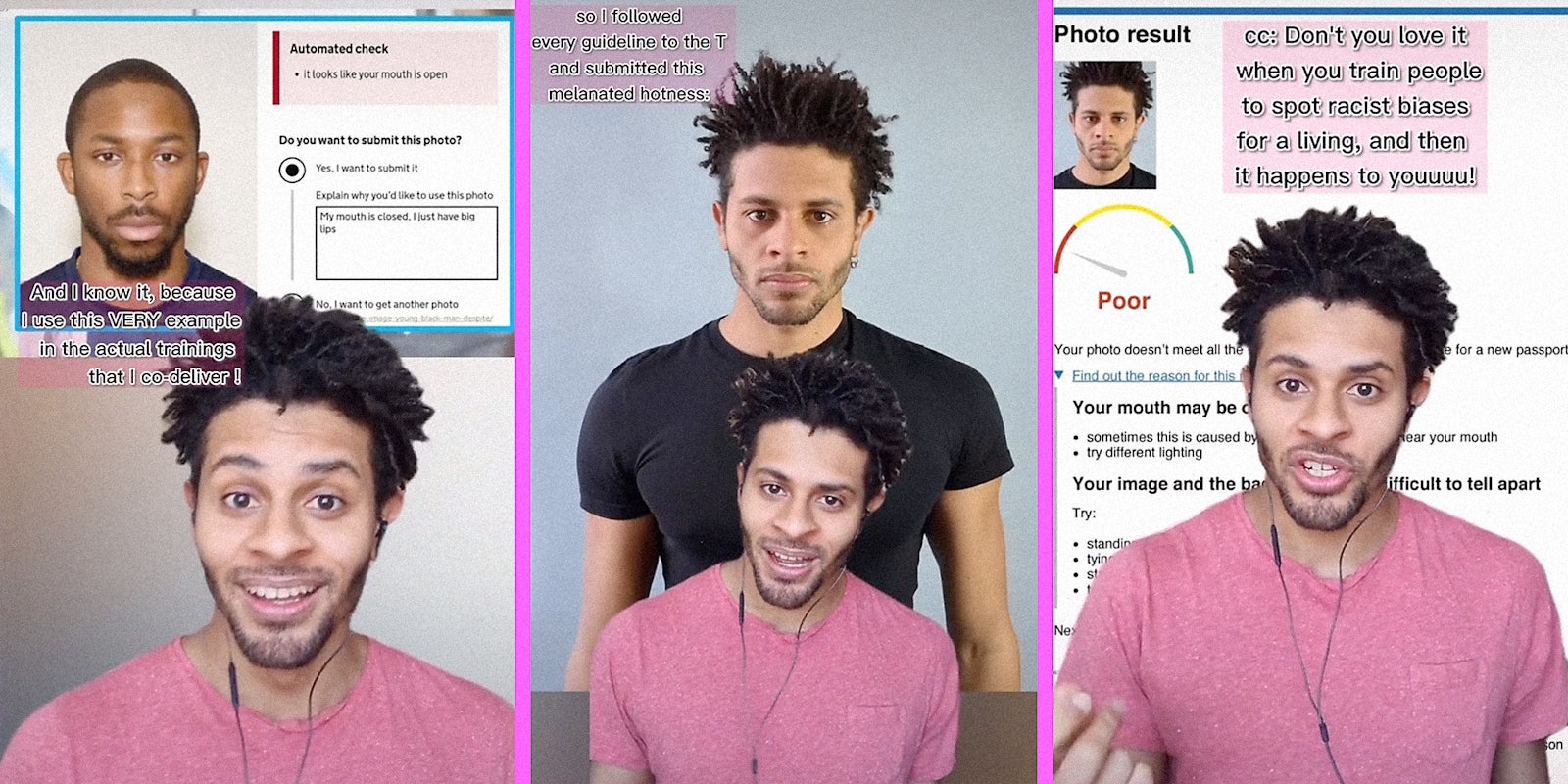

TikToker Joris Lechêne, whose videos unpack institutional prejudice, says his passport photographs were rejected by Her Majesty’s Passport Office in the U.K. because its facial recognition technology failed to identify his features.

Lechêne says that the algorithm deemed his photographs unacceptable because his mouth “may be open” and his “image and the background are too difficult to tell apart.”

But Lechêne, who is Black, shared the photos he took in the passport office. The images show him with a closed mouth and standing in front of a pale gray background that contrasts with his skin color.

The automated program suggests tying back or flattening hair, but in the photo, Lechêne has a shaved head.

Lechêne says that algorithms also tend to interpret photographs of Black people’s mouths as open when they’re closed. He shared a screenshot of the passport algorithm providing the same feedback to an image of another Black man whose mouth is closed and who has a shaved head.

Lechêne explained that outside of TikTok, he delivers training on racial biases, and he used this image as one of his examples.

In the video, Lechêne argues that not only is the British Passport Office’s algorithm flawed, but automation can often make things worse. Programs all over the world, including ones designed for use by police forces in France, Australia, and the U.S., have been proven to display racial and other biases.

A piece of software can only be as unbiased as its creators, and even people who believe themselves to be anti-racist, and aspire to be, are often still influenced by passive or hidden racism.

Facial recognition software struggles with Black features because images of Black people aren’t used in sufficient quantities to enable the algorithm to recognize them. Treating Black features as an afterthought results in software that at best inconveniences Black people and at worst causes them serious harm.

In another video, Lechêne says that if we want artificial intelligence to operate without replicating society’s harmful biases, we have to first eradicate those biases from society.

“This is just a reminder that if you think artificial intelligence can help build a society without biases, you are terribly mistaken,” Lechêne says. “Robots are just as racist as our society is.”

Lechêne and Her Majesty’s Passport Office did not immediately respond to the Daily Dot’s request for comment.
