If you ask a computer to describe a piece of abstract art, it might tell you a priceless print looks like a toilet.

To better understand how algorithms interpret art whose meaning even humans may not fully grasp, artist and researcher Matthew Plummer-Fernandez started a blog called “Novice Art Blogger,” in which a computer experiences art for the first time.

His code randomly selects an abstract piece of artwork from the Tate online archive and then sends the picture to an image-classification algorithm. The deep learning algorithm was created by researchers at the University of Toronto, and they’ve made their work available publicly for anyone to use.

The algorithm looks at images and tries to translate them into text captions based on what it sees. To teach the software to recognize different shapes, actions, or colors, researchers provide pre-captioned data with descriptions of each image, and the computer compares new images to the ones already in its system. Plummer-Fernandez’s code spits out the picture plus the generated caption, along with the description of its “nearest neighbor,” the captioned photo the abstract art most resembles.
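The “nearest neighbor” idea can be illustrated with a minimal Python sketch. It is not the University of Toronto code: the feature vectors and captions below are invented toy values, a real system would compute the vectors with a trained neural network, and cosine similarity is assumed here as the comparison measure. The function names are hypothetical.

```python
import math

# Hypothetical "pre-captioned" training set: each entry pairs a feature
# vector with a human-written caption. Real vectors would come from a
# trained neural network; these are invented toy values.
CAPTIONED_EXAMPLES = [
    ([0.9, 0.1, 0.0], "a white toilet in a small bathroom"),
    ([0.1, 0.8, 0.3], "a pair of scissors on a table"),
    ([0.0, 0.2, 0.9], "a red umbrella in the rain"),
]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def nearest_neighbor_caption(features: list[float]) -> str:
    """Return the caption of the stored example most similar to `features`."""
    _, best_caption = max(
        CAPTIONED_EXAMPLES,
        key=lambda example: cosine_similarity(features, example[0]),
    )
    return best_caption
```

For example, `nearest_neighbor_caption([0.8, 0.3, 0.1])` returns "a white toilet in a small bathroom." Whatever image goes in, the answer is always one of the stored captions, which is why a small set of reference data tends to produce the same few descriptions over and over.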

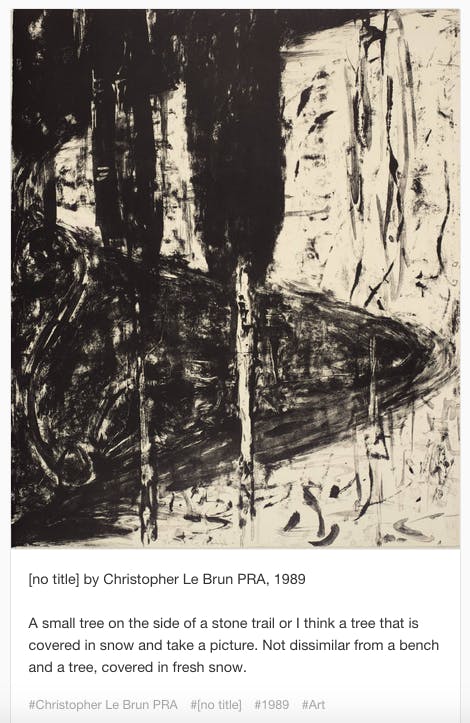

The automated process runs on a Raspberry Pi, and the end result is something like this:

Plummer-Fernandez is no stranger to researching how machine learning collides with popular culture. His blog Algopop looks at where and how algorithms appear in everyday life. Examining art through computer-generated descriptions, though, offers a different perspective: the fluid, subjective interpretation of art as seen by a logical machine.

“I was curious about its interpretation of abstract art as such art is very subjective and open to interpretation, it invites interpretation rather than dictate it,” Plummer-Fernandez said in an email interview with the Daily Dot. “There is no right or wrong way to experience such art, so an algorithm’s understanding of it would be equally valid. Also I was imagining that such a system would provide a more innocent and honest reading of art, without being burdened by art history, peer reviews, and collective consensus.”

The computer is very limited in what it can compare, however. Because it can only weigh incoming queries against the information and data it has been fed, many of the descriptions of the images are similar, with repeated references to scissors and toilets, Plummer-Fernandez said.

There’s still a long way to go before software can accurately describe what’s in any image, from art to photographs. The University of Toronto’s software is also used for an automated Twitter feed that pulls images from Reuters and attempts to describe them, and its largely inaccurate descriptions make the limits of its comparison knowledge clear. A paper published by the university explains in detail how machines can understand and describe images, as well as the programmatic challenges of algorithm-based identification.

It’s unlikely a human would ever describe an image the way the code does, and art historians and admirers may scoff at the idea of a computer truly understanding or appreciating great art. But machine learning algorithms have uncovered details in art that even the most experienced eye can’t.

At Rutgers University in New Jersey, one team of computer scientists discovered that by using an algorithm to compare a variety of paintings, artists, and styles, researchers can figure out who and what influences particular artists and paintings. Some results were already well-known in the artistic community, but other similarities had remained undiscovered for decades.

When I scrolled through “Novice Art Blogger,” I was struck by the apparent commentary on technology and the human experience. Art is abstract; it isn’t necessarily logical, or something to be boxed up and described by algorithms. Can we ever get to the point where computers understand and explain something that humans can’t?

For now, this remains an experiment.

“I see it as an art project,” he said. “It’s both entertaining and invites discussion about art, technology, and online culture. Second of all, I see it as a research tool, it reveals the nuances and quirks of Machine Learning—we can learn a lot by listening to what it says about art.”

Photo by Tomás Fano/Flickr (CC BY-SA 2.0)