Google’s Advanced Technology and Projects group lives in the future. Regina Dugan’s band of pirates just returns to our time every now and then to show us what they’ve brought back. At this year’s Google I/O, the team had plenty to show off, from updates to existing projects to all-new ventures that would be hard to believe if you saw them in science fiction. But Google ATAP had them front and center, demoing their latest projects in real time.

From mapping hand movements with radar to turning jeans into smartphone controls, here’s everything Google brought back in its DeLorean for us people of the present to marvel at.

Project Soli

Leading up to the unveiling of Project Soli, Dugan spoke about how viable small screens are. The conclusion was, essentially, we won’t be able to interact with screens if they get any smaller than watch size. For us to continue to interact with small displays, we’ll have to move beyond the touch screen.

The best way to think of Project Soli is to imagine the gesture controls of a touchscreen but without the actual touch. You still make the motion to press a button or turn a dial or swipe, but you do it anywhere and the action happens on the screen. It’s “all physical controls replaced by your hand,” according to Ivan Poupyrev, ATAP Technical Project Lead.

Gesture controls aren’t new, of course. The Kinect for the Xbox is perhaps the most prominent example, but we’ve seen this kind of thing promised for a wide variety of platforms, from desktop computers to smartphones.

The difference is that most of those systems require cameras or capacitive sensing technology to capture range of movement. Project Soli uses radar. And the radar chip is so small, it can fit inside a smartwatch.

The radar chip can map velocity and distance, and it uses range-Doppler processing. It’s capable of mapping the motions and gestures of a hand and turning that information into an on-screen action.
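For readers curious how a radar turns echoes into distance and velocity, here is a minimal sketch of the two textbook relationships involved. It is not Soli's actual signal processing: the function names are made up for illustration, and the 60 GHz carrier frequency used in the example is an assumed value that does not come from the demo.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def range_from_delay(round_trip_seconds):
    """Distance to a target from the echo's round-trip time: r = c * t / 2."""
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0


def radial_velocity(doppler_shift_hz, carrier_hz):
    """Speed toward or away from the radar from the Doppler shift: v = f_d * c / (2 * f_0)."""
    return doppler_shift_hz * SPEED_OF_LIGHT / (2.0 * carrier_hz)


# Assumed example values (hypothetical, for illustration only).
ASSUMED_CARRIER_HZ = 60e9  # 60 GHz carrier, an assumption
distance_m = range_from_delay(2e-9)                      # echo returns after 2 nanoseconds
speed_m_s = radial_velocity(400.0, ASSUMED_CARRIER_HZ)   # 400 Hz Doppler shift
print(f"distance: {distance_m:.3f} m, speed: {speed_m_s:.3f} m/s")
```

A range-Doppler view simply organizes echoes along these two axes at once, so a hand moving quickly close to the sensor shows up in a different place than one moving slowly farther away.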

Throughout the course of the demo, Poupyrev changed the speed of his gesturing to show the radar’s capacity for recognizing velocity. He also showed off its ability to perform “multiple modalities” by changing the time on the clock. He changed the hour, then moved slightly to the right and performed the same gesture to change the minute, then back to hour. The radar picked up on it all.

Project Soli will be available for developers to get their hands on later this year.

Project Jacquard

Project Soli set out to fix the issue of bandwidth when interacting with screens. Project Jacquard aims to address surface area. It also looks set to redefine the term “wearable” by turning clothes into touchscreens. Your pants could soon be where you do your scrolling.

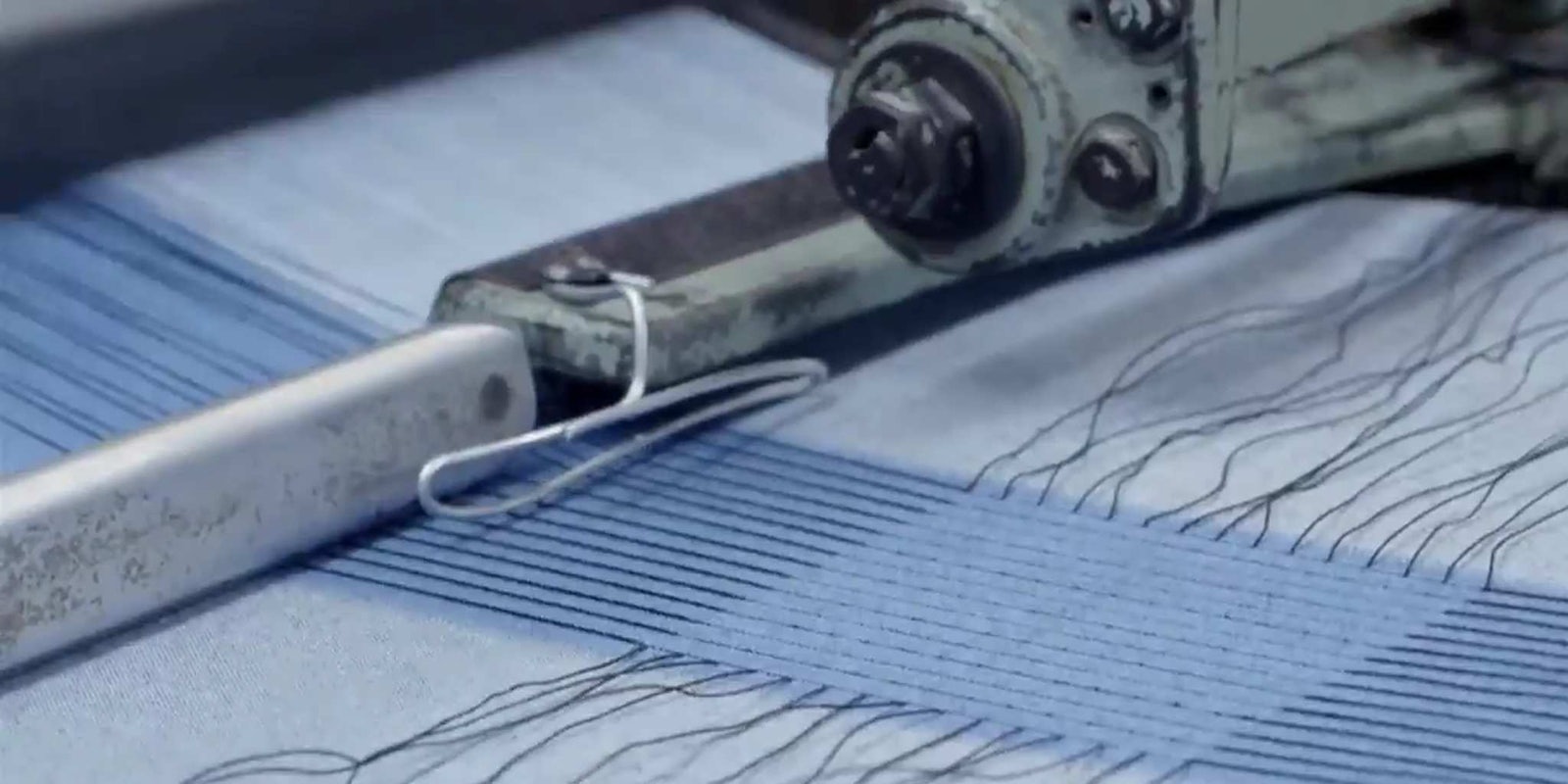

Project Jacquard makes it possible to weave multitouch sensors directly into clothing. Google has been working with industry leaders to create conductive yarns that will work with existing factories so they won’t have to overhaul their infrastructure for Google’s crazy clothing experiment.

Current conductive yarn comes in one color, so the ATAP team had to create conductive yarns in a wide variety of shades while also making them “hundreds of times more conductive,” all while maintaining the look and feel of normal yarn. Under a microscope, the wires are visible. But to the naked eye it looks like your average piece of thread.

The thread can be woven into just about anything, though the ATAP team learned the hard way that making an entire 20-meter textile interactive requires too much wiring to connect it to anything.

Instead, Google opted to include strategic patches that would provide interactivity. The patches are capable of sensing an entire hand or fingers and recognizing multitouch, waves, scrolling, and other gestures. In a later demo, a coat made with an interactive patch in the sleeve is shown. The wearer swipes on the patch and the gesture accepts a call on his phone.

Google’s first partner in implementing Project Jacquard will be Levi’s. Company Vice President Paul Dillinger came onstage to tell developers “you are all fashion designers along with us.”

Project Abacus

“Authentication sucks. Passwords suck.” Those were Dugan’s words onstage as she began talking about Project Abacus. Fingerprint identification is a first step, but ATAP sees security as more than that. Most passwords and security measures are a single-step process: You perform the task and it lets you in. ATAP wants to create “a continuum of trust.”

ATAP enlisted universities around the U.S. to help study the problem with passwords, and confirmed the hypothesis that a combination of security factors could create new security models—and could be implemented with just a software update.

The multi-factor security process takes into account a variety of standard phone operations that could be used to identify a person: how you swipe, type, move, and talk. All of this combines to create a score that fluctuates throughout the day and grants different levels of access to parts of the phone. A low score is fine if you want to browse the Web or play games, but it’s not going to let you into bank accounts or Google Wallet. Those stay locked up until the security measures are sure the phone is in your possession.
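As a rough illustration of the scoring idea described above, the sketch below combines per-signal confidences into one weighted score and checks it against per-resource thresholds. The signal names mirror the list in the talk (swipe, type, move, talk), but the weights, thresholds, and function names are all hypothetical; nothing here reflects how ATAP actually computes or applies its score.

```python
# Hypothetical weights: how much each behavioral signal contributes to the score.
WEIGHTS = {"swipe": 0.2, "typing": 0.3, "movement": 0.2, "voice": 0.3}

# Hypothetical minimum scores needed to open each kind of app.
THRESHOLDS = {"web": 0.2, "games": 0.2, "banking": 0.8, "wallet": 0.9}


def trust_score(signals):
    """Combine per-signal match confidences (each 0.0 to 1.0) into one weighted score."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        # Missing signals count as zero; out-of-range values are clamped.
        confidence = min(max(signals.get(name, 0.0), 0.0), 1.0)
        total += weight * confidence
    return total


def is_allowed(signals, resource):
    """Grant access only if the current score meets the resource's threshold."""
    return trust_score(signals) >= THRESHOLDS[resource]


# A phone that is fairly unsure who is holding it (made-up confidences).
unsure = {"swipe": 0.3, "typing": 0.3, "movement": 0.3, "voice": 0.3}
print(is_allowed(unsure, "web"))      # low-risk access
print(is_allowed(unsure, "banking"))  # high-risk access
```

Because the score is recomputed from whatever signals are currently available, access can widen or narrow over the course of the day instead of hinging on a single unlock step.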

Project Vault

Google ATAP had some more password-killing mojo left after Project Abacus, which led to Project Vault. A “hardware and software isolated environment” crammed onto a microSD card, Project Vault is basically a tiny computer designed to keep all of your valuable information safe. If your screen is like a window in your house that the outside world can look into, Project Vault is the safe you keep in the basement where no one can see.

Built with an ARM processor, NFC, and an antenna, Project Vault offers a suite of cryptographic services and 4GB of isolated storage. It lets Vault users communicate with each other without the phone reading the data, because the phone sees Project Vault as just a storage device.

Vault is a long way from seeing the light of day (“It’s still very much in the experimental stage,” Peiter Zatko said), but the source code for the development kit has already been made available.

Project Ara

Before closing out the keynote, ATAP gave an update on Project Ara, the modular smartphone Google announced last year. The Frankenstein device, which is made up of parts designed to be replaced and switched out at will, came to life onstage.

After slotting all the parts and sliding them into place, Google engineer Rafa Camargo powered up the device and showed it in action, marking the first time a Project Ara phone had been built and booted up onstage.

With the device powered on and running Android 5.0, Camargo slotted in a camera module on the fly and took a picture with it without rebooting the device. No additional details were shared about the device, but seeing it in action should satisfy those wondering about the progress of Project Ara.

Screengrab via Google Developers/YouTube