Recently, while scrolling through my Facebook News Feed, I was alarmed to find people sharing a video of an animal being tortured and killed.

The footage shows a man dragging a cat on a leash, kicking it, and tossing it in front of a snarling pit bull chained to a wall. The cat tries several times to escape, and each time the man, laughing, shoves it closer and closer until the dog is finally able to grab it. The cat does not survive.

Here’s an early screengrab, taken before the clip becomes graphic:

A Colombian user posted the video and attached a description in Spanish:

LA RAZA MAS PELIGROSA “LA HUMANA” ! La mayoria de gente es ignorante por no saber utilizar bien una mascota no importa la raza que sea , son solo para darles amor y no para ponerlos a peliar por diversion , decimos que la culpa la tienen los animales por ser asi , sabiendo que ellos solo actuan asi por defensa , los mismos dueños son los culpables por inculcarles esa conducta.

Which roughly translates to:

The most dangerous breed is the human one! Most people are ignorant because they don't know how to treat a pet properly. No matter the breed, pets are only there for us to give them love, not to make them fight for fun. We say the animals are to blame for being that way, knowing they only act that way in self-defense. The owners themselves are to blame for instilling that [violent] behavior.

The description reads like an anti-animal-cruelty PSA, so the poster's heart may have been in the right place. Even so, it's hard not to cringe at the attached video and wonder how Facebook had allowed it to spread so widely. (When I viewed it, it had already been shared thousands of times.) So I reported the video for graphic violence. I had nothing against the user who had posted it, but my gut instinct was that videos of tortured animals don't belong on Facebook, or anywhere else for that matter.

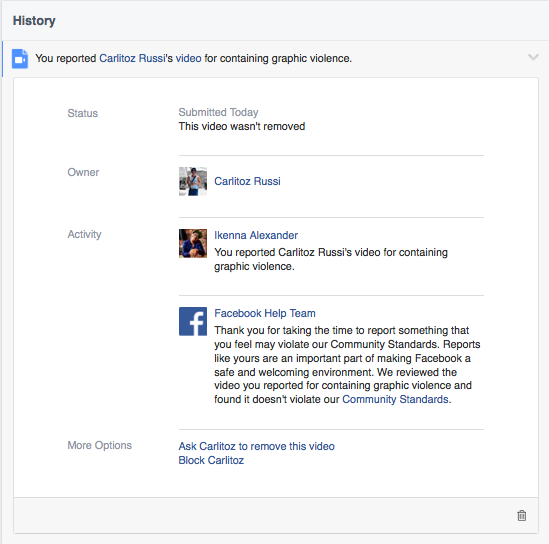

Within an hour of reporting the video, I received a surprising response from the Facebook Help Team.

“We reviewed the video you reported for containing graphic violence and found it doesn’t violate our Community Standards,” the team said.

Reading the Help Team's response, I couldn't help but wonder how the video could possibly comply with the service's Community Standards. Here's what Facebook has to say about graphic content:

Facebook has long been a place where people turn to share their experiences and raise awareness about issues important to them. Sometimes, those experiences and issues involve graphic content that is of public interest or concern, such as human rights abuses or acts of terrorism. In many instances, when people share this type of content, it is to condemn it. However, graphic images shared for sadistic effect or to celebrate or glorify violence have no place on our site.

When people share any content, we expect that they will share in a responsible manner. That includes choosing carefully the audience for the content. For graphic videos, people should warn their audience about the nature of the content in the video so that their audience can make an informed choice about whether to watch it.

By that standard, videos like this one may be acceptable on the site as long as the poster includes a warning to viewers. Needless to say, the person who shared the video of a cat being mauled to death provided no warning.

It raises the question of whether, and how, Facebook needs to better police its content, perhaps by adding an age requirement to view certain posts or an auto-generated landing page that displays a warning before certain content becomes visible. It also calls into question how effective a post like the one above is at raising social awareness. Are thousands of people sharing the video out of outrage or for a grotesque thrill? It's unclear.

This isn't the first time a video depicting animal cruelty has been posted to Facebook, and it's not the first time the social media giant has dragged its feet on removing such content. In fact, with the notable exception of breastfeeding photos, which it has been quick to take down, the social network has a history of responding slowly to hate speech and offensive content.

Two days after my initial report and Facebook's response, the company sent me another message, this time stating that the Help Team had not reviewed the video because the user had deleted it before the team had a chance to do so:

This contradicted the previous message, which said Facebook had reviewed the video and found it did not violate the site’s rules. It’s curious, considering that the video was available and shared online for two days after Facebook initially stated it wasn’t in violation.

So which is it, Facebook? Did the team review the video content at all, and if not, why did it reply to reports and claim that it did?

The problem isn’t Facebook’s community standards—it’s the social network’s failure to enforce its own rules.

Photo via Thierry Ehrmann/Flickr (CC BY 2.0) | Remix by Fernando Alfonso III