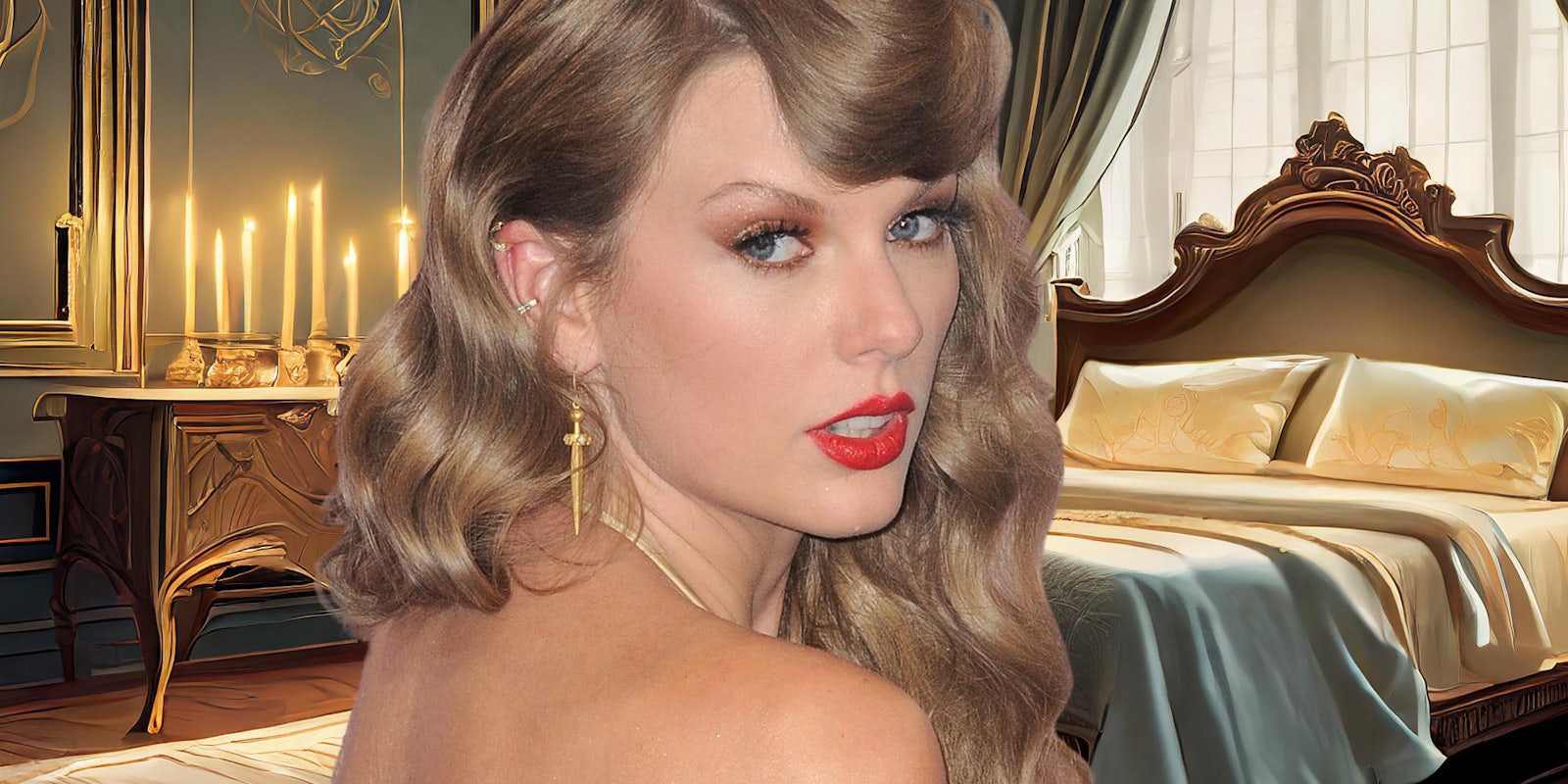

X allowed explicit AI-generated images of pop star Taylor Swift to rack up tens of millions of views.

The images, which include depictions of Swift being sexually assaulted by Kansas City Chiefs fans, went viral on Thursday after being posted by X user @Real_Nafu.

The lewd images immediately sparked backlash among Swift’s fans, who organized a mass-reporting campaign to get the post removed.

“the taylor swift ai images are insane. actually terrifying that they exist,” one fan wrote. “please report + don’t give more attention to those tweets. some of these men really need to be locked in a cage and shipped off to mars or smth.”

The effort among Swift’s fans ultimately paid off, resulting in the suspension of the account. The removal, however, came only after the user’s post had racked up more than 22 million views. The post had also been bookmarked by users on X more than 71,000 times.

Given the increasingly realistic capabilities of AI, many users compared the images’ spread to “The Fappening,” the massive leak of explicit celebrity photographs in 2014.

“22M views 154k likes on some AI porn of a real life person like some 2024 equivalent of thefappening,” one user wrote. “This site is so unbelievably cooked if this kind of sick shit can just stay up.”

Yet despite the initial removal, X seems to be doing little to delete other posts containing the imagery.

The images have remained visible on some accounts for upwards of six hours, and continue to garner tens of thousands of views. Such posts appear to be in violation of X’s policy regarding synthetic and manipulated media.

“Taylor Swift AI” also trended on the site, and the trending topic included links to the images.

The Daily Dot attempted to reach X over email but was met with an automated response: “Busy now, please check back later.”

Synthetic media expert Sam Gregory says the incident highlights the ever-growing problem of AI-generated non-consensual sexual imagery, whether it takes the form of still images or deepfake videos.

“Taylor Swift is being targeted now, but many prominent women celebrities and many private citizens, including recently multiple cases with teenage girls from New Jersey to Brazil to Spain, have been targeted with deepfakes,” Gregory told the Daily Dot. “It is not only extremely easy with advances in generative AI to generate nude images or ‘nudify’ an individual using available tools or open-source options. But it’s also a huge problem that it’s very easy to find these images.”

While legislation has been introduced to address the issue, victims of AI-generated non-consensual content have little to no recourse.