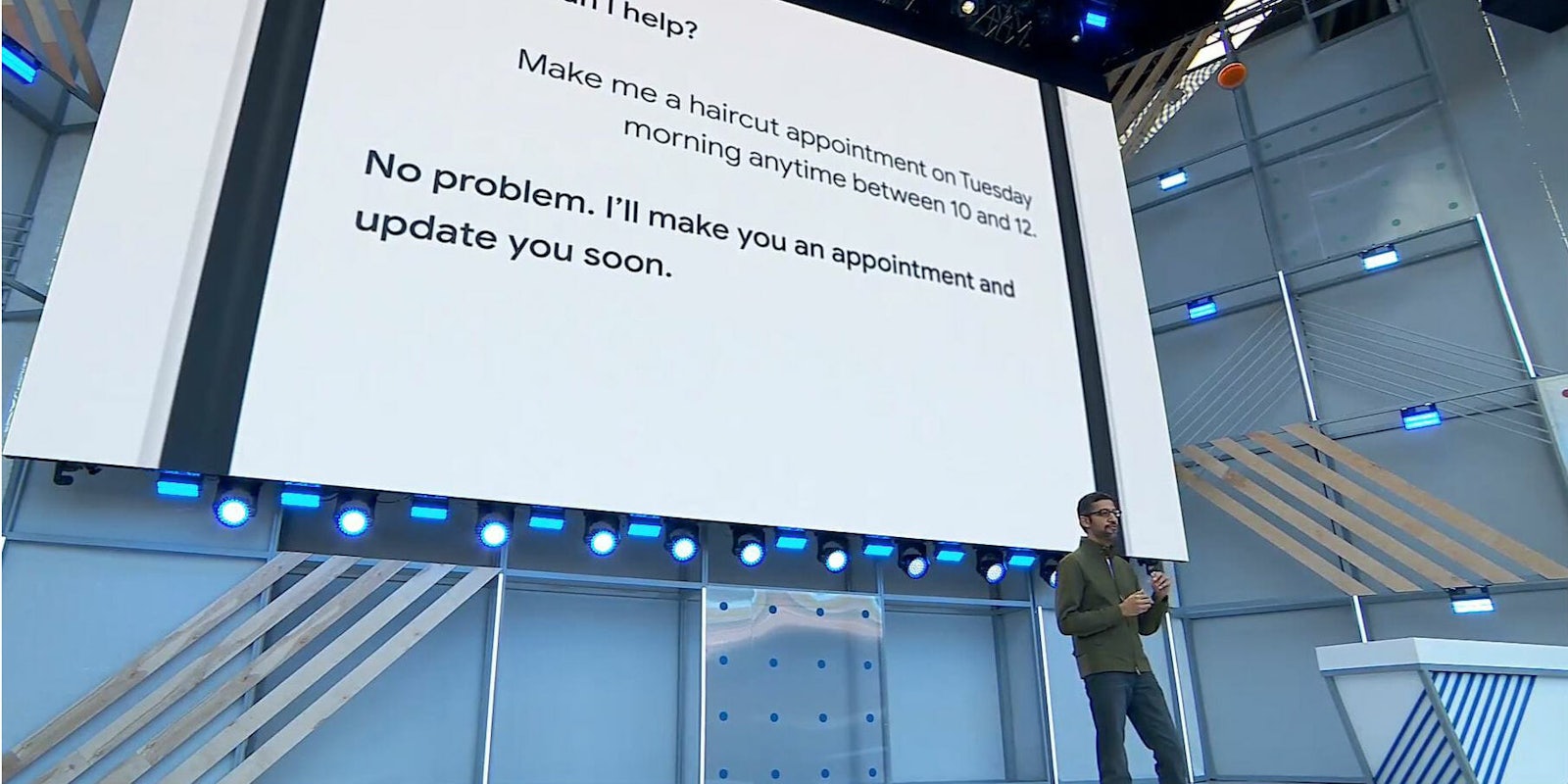

The audio clip Google demonstrated during its I/O conference keynote sounded like an ordinary phone call of a woman setting up a haircut appointment with a salon employee. But there was something peculiar about the exchange that audience members probably wouldn’t have noticed if Google CEO Sundar Pichai hadn’t prefaced the feature he was showing off. Incredibly, one of the women on the call was actually an enhanced version of Google’s AI Assistant.

The fascinating demonstration at the company’s developer conference illustrated how the Assistant can make a call on behalf of a user to help them book an appointment. It does this using a frighteningly convincing voice, realistic enough that people on the other end have no idea who (or what) they are speaking to.

Pichai played back audio clips from two tests Google conducted using the in-development feature it’s calling Google Duplex. In the first, the voice assistant calls a salon to book a haircut appointment. Not only are the tone and pitch of the voice surprisingly lifelike, but its ability to adjust its responses on the fly to create a fluid, intelligible conversation walks a fine line between fascinating and terrifying.

Here is a sample of the conversation.

Duplex AI: “Hi. I’m calling to book a women’s haircut for a client. Um, I’m looking for something on May 3rd.”

Salon: “Sure, give me one second.”

Duplex AI: “Mhm”

Salon: “Sure, what time are you looking for, around?”

Duplex AI: “At 12pm.”

Salon: “We do not have a 12pm available, the closest we have to that is a 1:15.”

Duplex AI: “Do you have anything between 10am and, uhh, 12pm?”

Salon: “It depends on what service she would like. What service is she looking for?”

Duplex AI: “Just a women’s haircut for now.”

The AI goes on to book the appointment and send a calendar event as confirmation to the user who requested it.

In the second example, Google explained how its AI can handle difficult situations when booking an appointment or reservation isn’t straightforward. In this demo, a male voice calls up a restaurant to book a reservation for four but language barriers create some misunderstanding. The AI effectively simplifies things to reach its desired outcome.

You can watch both demos in the video below.

“The technology is directed towards completing specific tasks, such as scheduling certain types of appointments,” the company wrote in its blog post that further describes the system. “For such tasks, the system makes the conversational experience as natural as possible, allowing people to speak normally, like they would to another person, without having to adapt to a machine.”

Pichai said Google has been working on the technology for years, and it’s still in development. A stripped-down version that calls restaurants during holidays to find out their hours will be tested in the “coming weeks.” Once Google has that data, it can update Google Search and Maps so individuals don’t flood businesses with phone calls.

Duplex is easily one of the most impressive displays of AI we’ve ever seen. Yes, it was Google’s own DeepMind that created an AI capable of beating the world’s best Go players, but that system had an extremely limited set of abilities. Duplex, which was made with natural language understanding, deep learning, and text-to-speech capabilities, has the potential to have a significant impact on people’s day-to-day lives.

As impressive as it may be, there is something inherently unnerving about a robot voice being nearly indistinguishable from a human. Google is aware of the moral implications of its technology and is working on a solution.

“It’s important to us that users and businesses have a good experience with this service, and transparency is a key part of that,” the company wrote. “We want to be clear about the intent of the call so businesses understand the context. We’ll be experimenting with the right approach over the coming months.”

Google Duplex isn’t perfect. There were moments in the demo where you could pick out anomalies in its tone, or responses that weren’t exactly in line with what was asked. Google acknowledged that while Duplex is “capable of carrying out sophisticated conversations” and “completes the majority of its tasks fully autonomously, without human involvement,” there are tasks it can’t complete, which are handed off to a human operator.

Regardless, if Google releases the AI with the level of accuracy it showed off today, it would mark a significant leap in the complexity of AI-based features available to average smartphone users.