Researchers in Europe are testing what they call the first “World Wide Web for robots,” the BBC reported.

At present, the name is something of an exaggeration. The project, dubbed RoboEarth, is currently being tested with only four robots. They share information with one another on a common cloud network, much as humans do on the Web.

The robots are being tested in a hospital environment at Eindhoven University, where the system was developed in conjunction with four other European schools. The robots use RoboEarth to exchange information about their environment in order to perform tasks such as serving patients drinks.

“A task like opening a box of pills can be shared on RoboEarth, so other robots can also do it without having to be programmed for that specific type of box,” Rene van de Molengraft, the project’s leader, told the BBC.

According to the BBC, the eventual goal of RoboEarth is to become a “common brain for machines.”

Van de Molengraft explained that one problem RoboEarth attempts to solve is that the environment changes so quickly that a machine’s programming soon becomes outdated and useless. Connected robots that collect data (some may say “learn”) about the environment can adapt to changing circumstances.

Far-off scenarios about self-aware hive minds aside for the moment, RoboEarth may right now teach us as much about ourselves as about the future of machines.

A brief look through the history of androids, actroids and humanoid robots reveals that we have often conceived of robots in our own image. Decades ago, those robots were modeled after the idea of self-sufficiency. Even IBM’s Jeopardy-conquering Watson was built with a massive database of static knowledge. But now, as we have moved much of our knowledge base onto the Web, where it is queryable and accessible to everyone, such a model for robots seems outdated. In that sense, RoboEarth is indicative of how our notions of knowledge have changed in the past decade.

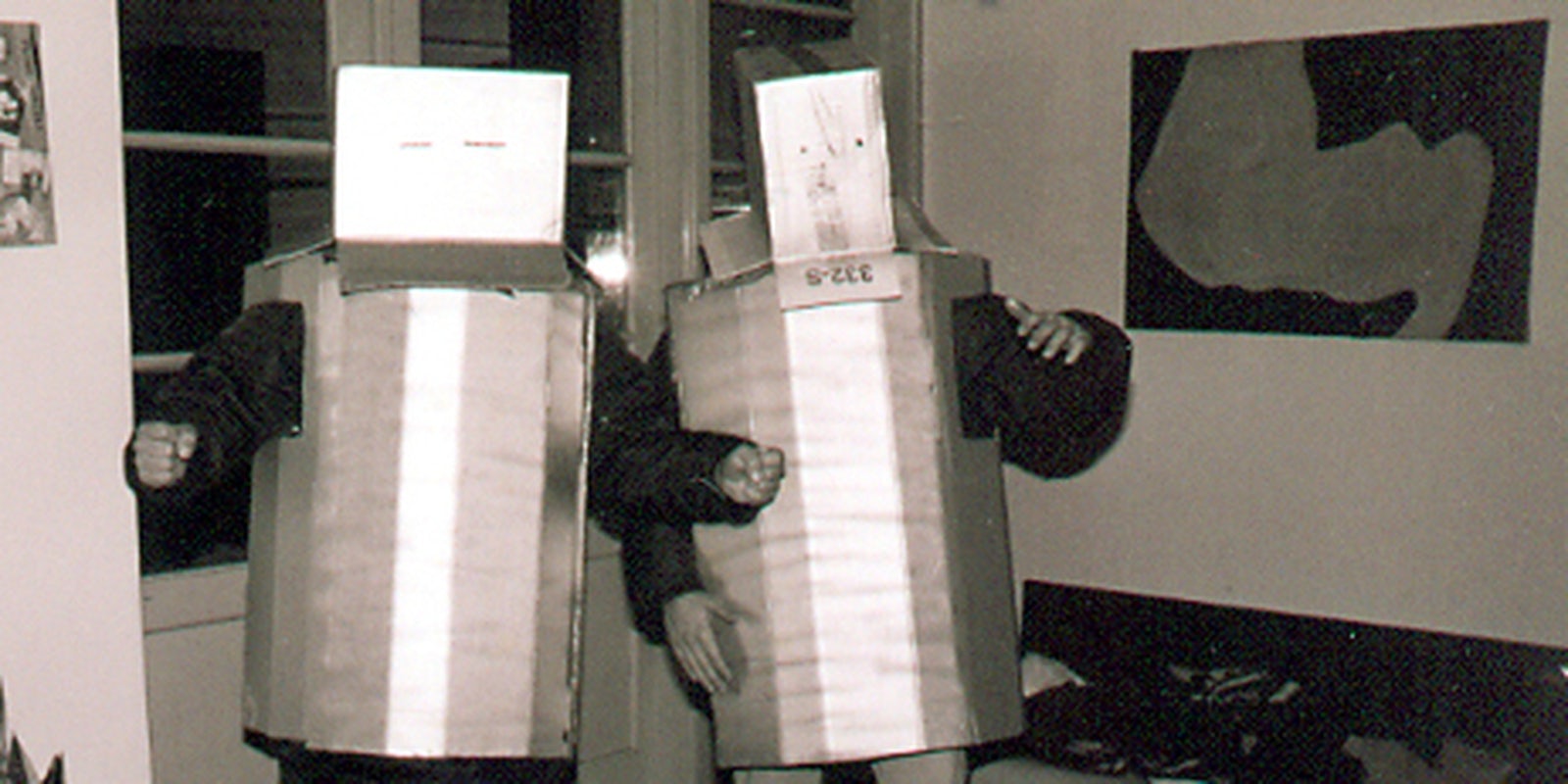

Photo by Tarkowski/Flickr