In its rush to rid itself of disgusting comments about young children on its site, YouTube said in a series of tweets on Thursday that videos could be stripped of advertising revenue if their comment sections are deemed inappropriate. Now, some YouTubers are worried that this new policy leaves them open to abuse.

Earlier this week, major advertisers like Disney, Nestle, and Epic Games pulled their money out of YouTube after a content creator named MattsWhatItIs discovered a “softcore pedophile ring” via a “wormhole” on the site.

Some YouTubers have bashed MattsWhatItIs, whose real name is Matt Watson, for potentially hurting their livelihoods by demanding advertisers stop spending money on YouTube and for using the discovery of potential child abuse as a way to make a bigger name for himself.

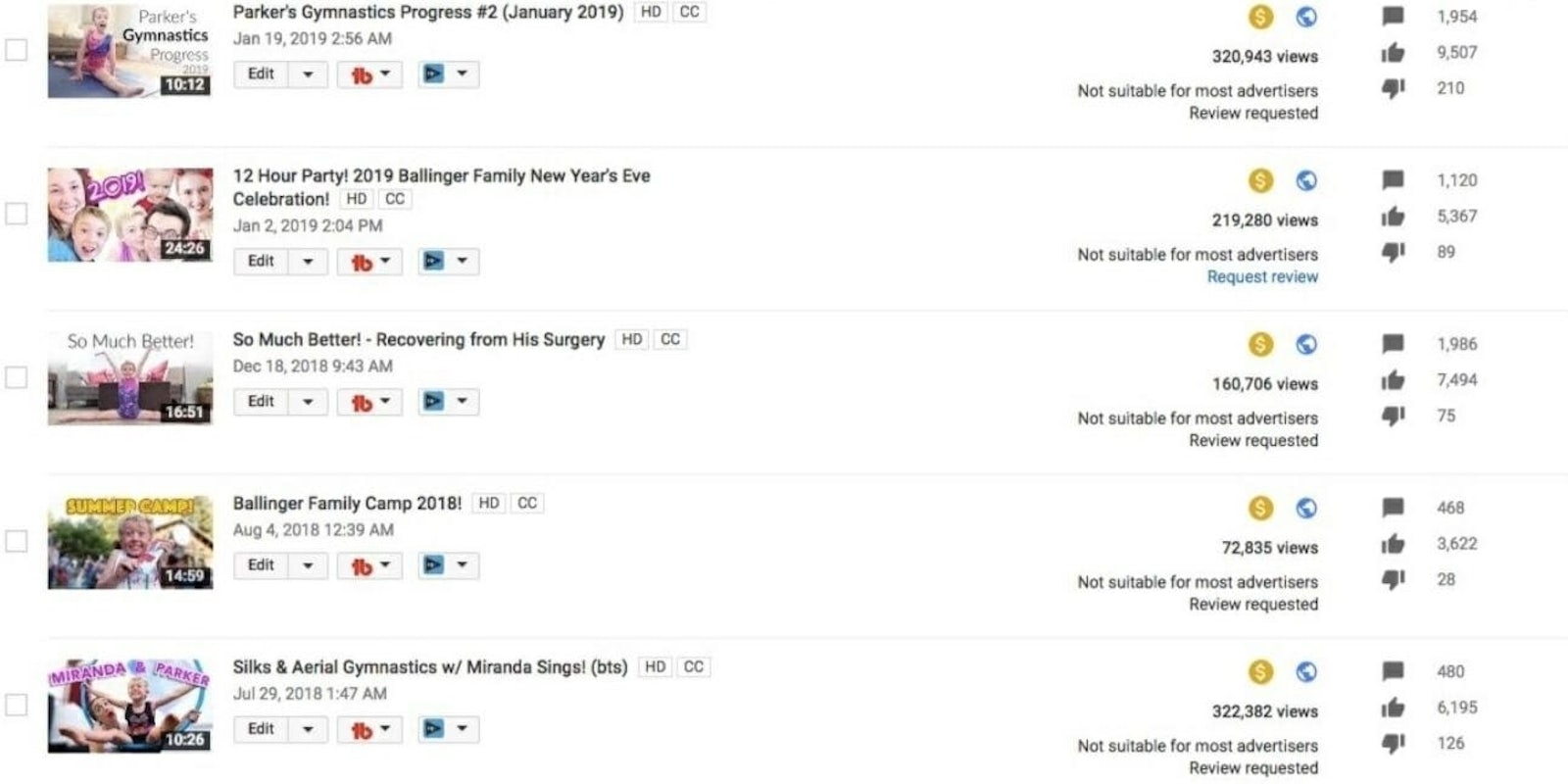

On Thursday, Jessica Ballinger—whose YouTube channel, BallingersPresent, has more than 1.2 million subscribers—tweeted that a video of her 5-year-old son doing gymnastics had been deemed inappropriate by YouTube. That means the channel can’t earn money on a video with more than 300,000 pageviews.

MY 5 YEAR OLD SON: does gymnastics and is a happy, sweet, confident boy.

youtube: NOT ADVERTISER FRIENDLY

(This happened yesterday— I find the timing on this very, very disheartening for the @YouTube community) pic.twitter.com/pewLkljFpI

— Jessica Ballinger (@BallingerMom) February 21, 2019

YouTube responded to Ballinger by pointing to an earlier statement in which it said that, over the preceding few days, it had disabled the comment sections on tens of millions of videos, terminated more than 400 channels over inappropriate comments, and contacted law enforcement.

YouTube also wrote on Twitter, “For reference, over the past few days, we’ve taken a number of actions to better protect the YouTube community from content that endangers minors. With regard to the actions that we’ve taken, even if your video is suitable for advertisers, inappropriate comments could result in your video receiving limited or no ads (yellow icon).”

That response troubled many of the YouTubers following the Twitter thread, however, because a troll could flood an otherwise appropriate video's comment section with offensive comments until the video was deemed unsuitable for advertisers, costing its creator ad revenue.

Ballinger wrote, “I have a HIGHLY monitored comments section and many say it is the kindest on YouTube. This makes NO SENSE. Remove the few comments and ban the user.” YouTube responded that these recent actions were due to an “abundance of caution.”

Not all channels do moderate and we’ve had to take an aggressive approach and more broad action at this time. We’re also investing in improving our tools to detect/remove this content, so we rely on your moderation less.

— TeamYouTube (@TeamYouTube) February 22, 2019

Many others took Ballinger’s side, saying that YouTube was punishing creators for user comments that are out of their control.

One Twitter user wrote, “So if anyone brigades and comments on a video they don’t like, with offensive language, the video creator will be punished? That sounds like a foolproof plan.”

EnterElysium, who has 250,000 YouTube subscribers, wrote, “You must be aware of the power of fandoms and groups and how that makes that both untenable and unprofitable for anyone who falls afoul of them. Let alone the active hate mobs.”

YouTube star Keemstar said one way to guard against potential abuse is for YouTubers to disable comments on their videos when they upload them, but others countered that doing so could hurt a video's reach and its pageviews (which would hurt the creator's wallet anyway).

YouTube did not immediately reply to a Daily Dot request for comment on how it can mitigate the risk of bad actors spamming the comment section of an appropriate video in order to demonetize it.

But it’s clear that YouTube’s issues are far from over. Whether it’s self-harm videos on the YouTube Kids app or young teens participating in ASMR videos, it’s so far proved impossible for YouTube to keep its site completely safe for children.

Update 11:14am CT, Feb. 22: A YouTube spokesperson told the Daily Dot on Friday that the platform has deemed it necessary at this point to limit the ads on videos that could be at risk for predatory comments. YouTube also said it isn’t looking at entire channels for limited ads. Instead, only individual videos are facing that scrutiny.

YouTube did not respond to an initial question about the potential for trolls to harass content creators and impact their ad revenue.
