Looks like FaceApp, the new face-morphing craze, already needs a makeover.

Launched in January and hailing from Russia, FaceApp is catching interest for its artificial intelligence technology and intriguing filters that can make you look older, younger, or a different gender. It uses "deep generative convolutional neural networks" to edit selfies in a way that looks real, like adding small details around your eyes and mouth to create a natural grin.

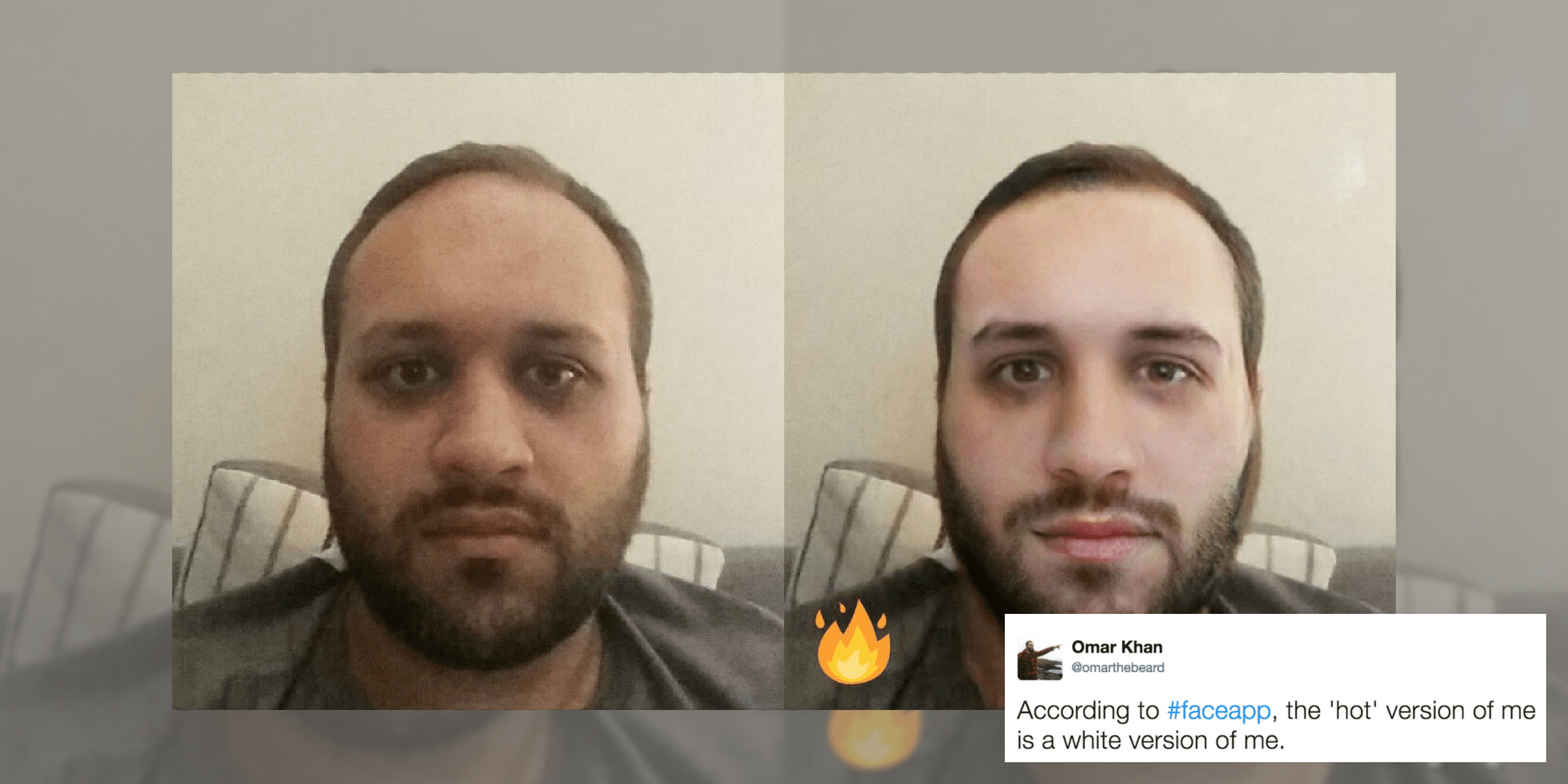

Unfortunately, the app also makes you whit- I mean, hotter, too. Check out what happens when you use the "hot" filter.

https://www.instagram.com/p/BTTxi0Gg-dL/

Why does #faceapp hot feature make me white? pic.twitter.com/OsSs5wlUSX

— PLANET LYNX (@PLANETLYNX) January 28, 2017

I really like #faceapp. The teeth as well as their training set for "hot" people are too white to suit me pic.twitter.com/WcytATawp6

— Arjun Sundararajan (@sarjun) January 31, 2017

Faceapp made me hot by turning me white...cool #FaceApp pic.twitter.com/JFxSsjIsXj

— Dark Bomber (Eddie) (@DarkBomberX) April 10, 2017

https://twitter.com/MarachiiSoup/status/851962932149833728

https://www.instagram.com/p/BTB2xC7A32c/?taken-by=kharyrandolph

Under that same "hot" filter, the app also appears to change certain features completely, removing glasses and masking faces with European features.

#faceapp removes glasses and replaces eyes. With white people eyes. :| pic.twitter.com/mUgrcrXds1

— Three Small Guillotines in a Trenchcoat (@littlebunnyfu) April 18, 2017

#faceapp isn't' just bad it's also racist...? filter=bleach my skin and make my nose your opinion of European. No thanks #uninstalled pic.twitter.com/DM6fMgUhr5

— Terrance AB Johnson (@tweeterrance) April 19, 2017

The #FaceApp idea of making me hot is making me white. That's racist as hell pic.twitter.com/6z3kcLn42V

— dco (@The_MiddleC) April 21, 2017

https://twitter.com/omarthebeard/status/855528946808623105

Faceapp teaches us: White is hot. #faceapp #racism pic.twitter.com/V7Vgzq67U0

— Matti Hernesaho (@hernesaho) April 23, 2017

Of course, this visible lightening under a filter called "hot" insinuates several things: that Eurocentric features such as narrow noses and white skin are the ultimate standard of hot, and that darker skin of any shade is decidedly not hot at all. Both perpetuate the idea that skin color determines a person's value.

FaceApp CEO Yaroslav Goncharov has since apologized for the app's whitewashing, issuing a statement to the FADER acknowledging the criticism of the app.

"We are deeply sorry for this unquestionably serious issue," Goncharov's statement read. "It is an unfortunate side-effect of the underlying neural network caused by the training set bias, not intended behavior."

As Snapchat's "whitewashing" filters and Google Photos' automatic classification of pictures of black people as animals have shown, FaceApp's "training set bias" issue isn't unique to the new app, nor will this be the last time we encounter a racist face-detection feature. But Goncharov has already launched an attempt to reverse this offense.

The founder and CEO told the FADER that a fix for the feature is in the works, but in the meantime, FaceApp's "hot" filter will be called "spark" to erase any positive connotations. The change has already taken effect within the app, though it appears that the iPhone app was last updated April 17, and the "spark" filter still lightens skin.

H/T the FADER