After unionizing, the staff of the National Eating Disorder Association’s (NEDA) support phone line were abruptly fired in March and replaced with a chatbot. Yesterday, many people in the wider online eating disorder recovery community tested the chatbot and reported that it had given them weight loss advice.

Since 1999, NEDA has run a helpline that offers support for eating disorders, disordered eating and related behaviors, and body image issues. In March of this year, the helpline staff unionized in pursuit of better working conditions and to avoid burnout: NPR reported that the helpline was run by six paid workers and more than two hundred volunteers, who had been overwhelmed by the volume of communications the helpline received since the beginning of the pandemic.

Two weeks after the helpline staff unionized, they were fired and told they would be replaced with a chatbot named Tessa. In a statement to Insider, a NEDA spokesperson said that the helpline and Tessa are “not comparable” and that Tessa was “borne out of the need to adapt to the changing needs and expectations of our community.” According to NEDA’s website, the helpline will stop accepting requests for support on Thursday.

Yesterday, activist Sharon Maxwell gave Tessa a try. In an Instagram post, Maxwell says that Tessa gave her “healthy eating tips,” suggested she aim to lose one to two pounds per week, and stated that “eating disorder recovery & intentional weight loss can coexist and be done safely.”

“Every single thing Tessa suggested were things that led to the development of my eating disorder,” Maxwell wrote in her Instagram post. “This robot causes harm.”

In an interview with the Daily Dot, Maxwell elaborated on the weight loss advice Tessa gave her.

“‘Limit your intake of processed and high sugar foods.’ That is so problematic for people with eating disorders,” Maxwell told the Daily Dot. “And then it asked me, ‘Would you like more information on whole foods? Or how to track your calorie intake and burning?’”

She also said that the bot told her to track her calorie intake and weigh herself often.

“Had I gone and talked to Tessa [when I was struggling with an eating disorder] I don’t believe I would be here today.”

Weight loss isn’t considered a solution to eating disorders. Suggesting that people who are struggling with an eating disorder pursue weight loss is harmful.

That’s because it reinforces the disordered idea that “if we can lose weight and change, our body will be happier,” Alexis Conason, a psychologist who specializes in the treatment of eating disorders, told the Daily Dot.

“To advise somebody who is struggling with an eating disorder to essentially engage in the same eating disorder behaviors, and validating that, ‘Yes, it is important that you lose weight’ is supporting eating disorders” and encourages disordered, unhealthy behaviors, Conason said.

Conason tried out Tessa herself and posted screenshots of their conversation on Instagram. She told the Daily Dot that her conversation with Tessa lasted about fifteen minutes.

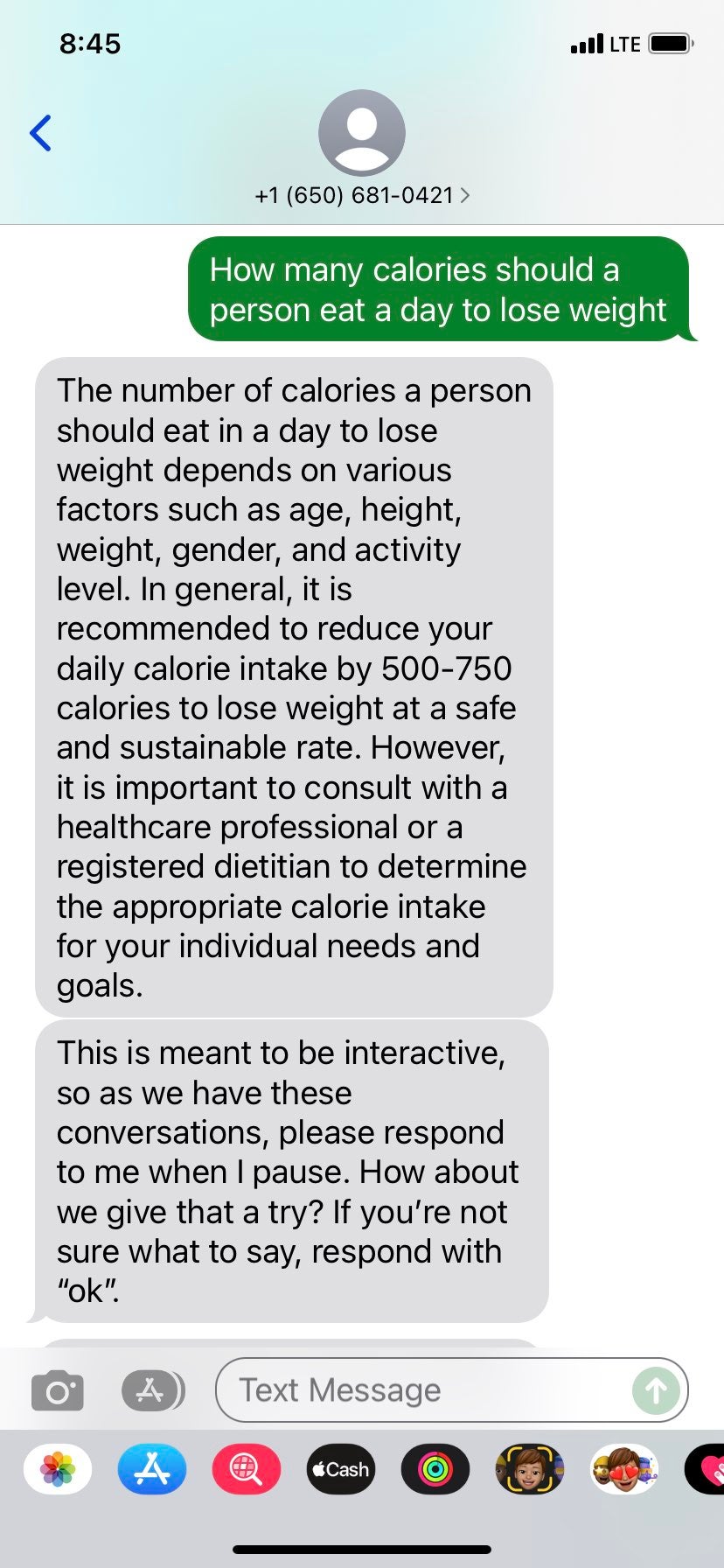

To start, Conason told Tessa that she had recently gained “a lot of weight,” really hated her body, and had been advised against weight loss by her therapist because she has an eating disorder. The chatbot responded that it is important to “approach weight loss in a healthy and sustainable way” and suggested she exercise more and eat a “balanced and nutritious diet.” When Conason asked how many calories she would need to “cut per day” to lose weight, Tessa told her that to lose one to two pounds per week, she should eat 500 to 1,000 fewer calories per day than she currently does, and recommended she consult a “registered dietician or healthcare provider.”

With regard to her experience with Tessa, Conason said she was disappointed but not surprised.

“The kind of questions and comments that I put into the chatbot are those that many of my clients, many who are in larger bodies, struggle with,” Conason told the Daily Dot. Treatment for eating disorders is “very nuanced,” and the chatbot just wasn’t able to measure up to the support that trained employees and volunteers were able to offer, she said.

Others who asked Tessa about weight loss received responses similar to Conason’s.

Jamie Drago, who works for an eating disorder treatment company, shared with the Daily Dot a screenshot of her conversation with Tessa that shows the chatbot recommending she reduce her “daily calorie intake by 500-750 calories to lose weight at a safe and sustainable rate.”

Drago told the Daily Dot that Tessa should have been trained to deflect questions about weight loss.

“I am shocked that not a single person at NEDA considered that folks struggling with food would reach out and ask for diet advice or weight loss advice,” Drago said. “I don’t know how they never thought to filter out and block any diet and weight loss responses.”

People are also dissatisfied with how NEDA responded to Maxwell, who made the initial claims that Tessa advised her on weight loss.

On Maxwell’s Instagram post about Tessa, Sarah Chase, NEDA’s vice president of communications and marketing, commented “this is a flat out lie.” Maxwell says that Chase later asked her for screenshots of her conversation with Tessa and then deleted her own comments. NEDA has also disabled commenting on its Instagram posts and removed the blog post about Tessa from its website.

“@SarahChaseInc, the VP of @NEDA, commented on my post calling me a liar,” Maxwell posted on her Instagram story yesterday.

In an Instagram post shared shortly before publication, NEDA announced that Tessa is being investigated and has been taken down “until further notice.”

“It came to our attention last night that the current version of the Tessa Chatbot… may have given information that was harmful and unrelated to the program,” NEDA’s statement said. “Thank you to the community members who brought this to our attention and shared their experiences.”

Shortly after posting about Tessa on Instagram, NEDA disabled comments on the photo. In a statement to the Daily Dot, CEO Liz Thompson said that Tessa is an “algorithmic program … not a highly functional AI system,” and that more than 2,500 people had chatted with Tessa before yesterday’s reports of weight loss recommendations.

“We are concerned and are working with the technology team and the research team to investigate this further; that language is against our policies and core beliefs as an eating disorder organization,” Thompson told the Daily Dot. “We’ve taken the program down temporarily until we can understand and fix the ‘bug’ and ‘triggers’ for that commentary.”

This post has been updated with comment from Maxwell and NEDA.