Go is a board game that originated in China more than 2,500 years ago. More than 40 million people play it worldwide, and it is considered one of the four essential arts required of a true Chinese scholar.

And Google’s DeepMind branch just created one of the greatest to ever play.

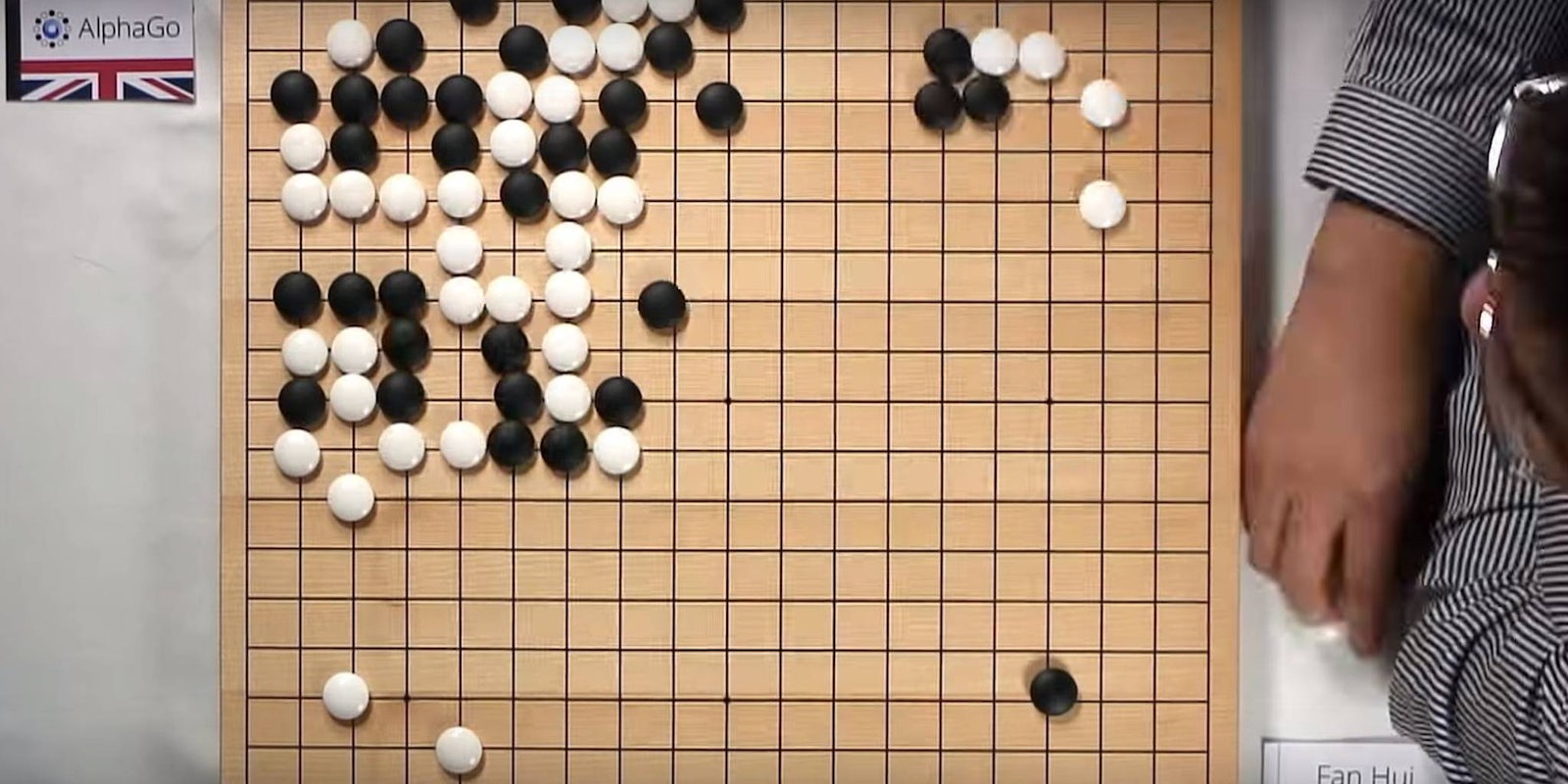

Last year, the company's AlphaGo program defeated Fan Hui, Europe's three-time Go champion. The match took place behind closed doors in October, and AlphaGo won five games to zero. It was the first time a computer had beaten a professional Go player, and before doing so it won 499 of 500 games against other Go-playing programs.

The rules of Go are actually pretty simple: The game is traditionally played on a 19-by-19 grid, where two players take turns placing black or white stones on the intersections of the lines. The goal is to control more of the board than your opponent, either by capturing the opponent's stones by surrounding them on all sides or by walling off territory the opponent cannot enter.
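The capture rule is easy to express in code. The following is a minimal Python sketch, not anything taken from AlphaGo: the names `Board`, `neighbors`, `group_and_liberties`, and `place_stone` are made up for illustration, and the sketch assumes a simplified rule set that ignores suicide moves, the ko rule, and scoring. A group of connected stones is captured once it has no empty neighboring intersections (its "liberties") left.

```python
from typing import Dict, Iterator, Set, Tuple

Point = Tuple[int, int]          # (row, col), zero-based
Board = Dict[Point, str]         # occupied points only: "B" or "W"

SIZE = 19  # traditional board size


def neighbors(p: Point, size: int = SIZE) -> Iterator[Point]:
    """Yield the on-board points directly above, below, left and right of p."""
    r, c = p
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < size and 0 <= nc < size:
            yield (nr, nc)


def group_and_liberties(board: Board, start: Point, size: int = SIZE):
    """Flood-fill from start; return the connected group and its empty neighbors."""
    color = board[start]
    group: Set[Point] = {start}
    liberties: Set[Point] = set()
    stack = [start]
    while stack:
        p = stack.pop()
        for n in neighbors(p, size):
            if n not in board:
                liberties.add(n)
            elif board[n] == color and n not in group:
                group.add(n)
                stack.append(n)
    return group, liberties


def place_stone(board: Board, point: Point, color: str, size: int = SIZE) -> Set[Point]:
    """Place a stone, remove opposing groups left with no liberties, return them.

    Simplification: suicide and ko are not checked.
    """
    if point in board:
        raise ValueError("intersection already occupied")
    board[point] = color
    captured: Set[Point] = set()
    for n in neighbors(point, size):
        if n in board and board[n] != color and n not in captured:
            group, liberties = group_and_liberties(board, n, size)
            if not liberties:
                captured |= group
    for p in captured:
        del board[p]
    return captured


# A lone white stone with black stones on three sides; black fills the fourth.
board: Board = {(1, 1): "W", (0, 1): "B", (1, 0): "B", (1, 2): "B"}
captured = place_stone(board, (2, 1), "B")
print(captured)
```

In this example the white stone at `(1, 1)` loses its last liberty when black plays at `(2, 1)`, so it is removed from the board.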

What makes AlphaGo’s victory so compelling is the complexity of the game. There are more than a googol (1×10^100) times as many ways to position your pieces in Go as in chess. (A googol alone is greater than the estimated number of atoms in the observable universe.)
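To get a feel for the scale, here is a rough back-of-the-envelope check in Python. It is only a sketch under stated assumptions: it counts every way to mark each of the 361 intersections as empty, black, or white, which is an upper bound that includes arrangements illegal in real play, and it uses 10^80 as the commonly quoted order-of-magnitude estimate for atoms in the observable universe. It does not reproduce the Go-versus-chess comparison itself.

```python
GOOGOL = 10 ** 100
ATOMS_ESTIMATE = 10 ** 80   # assumption: commonly quoted rough estimate

POINTS = 19 * 19            # intersections on a full-size board

# Each intersection is empty, black, or white. This over-counts,
# because some arrangements cannot occur in a legal game.
upper_bound = 3 ** POINTS


def order_of_magnitude(n: int) -> int:
    """Return the power of ten of a positive integer (number of digits minus one)."""
    return len(str(n)) - 1


print(POINTS)
print(order_of_magnitude(upper_bound))
print(upper_bound > GOOGOL > ATOMS_ESTIMATE)
```

Python integers have arbitrary precision, so the count is computed exactly rather than as a floating-point approximation.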

Google DeepMind tackled this complexity by combining an advanced tree search with deep neural networks. The company first trained a neural network on 30 million moves made by Go experts, which taught AlphaGo to predict a human player’s next move. The next step was to get AlphaGo to make its own decisions by having it play thousands of games against itself, adjusting after each one.

This is not the first time we have seen human experts defeated by computers. IBM’s Deep Blue defeated Garry Kasparov, the reigning world chess champion, in 1997; in 2011, IBM’s Watson defeated Ken Jennings, one of the best Jeopardy! contestants of all time.

This is not the end for AlphaGo. Google DeepMind will challenge the legendary Lee Sedol, the greatest Go player of the past decade, in March. We will keep our eyes on the match to find out whether the best of humankind loses another strategy game to our own creation.

H/T Engadget | Screengrab via DeepMind/YouTube