If you’re thinking about gussying up some nude images of yourself through artificial intelligence filters, then don’t. At least, that’s according to a cybersecurity engineer and self-proclaimed “ethical hacker” who goes by Brains (@brains933) on TikTok. The creator has warned folks in a viral post about the dangers of putting pictures of your body through an AI imaging program.

Applications like Dall-E, NightCafe Creator, DeepAI, HotPotAI, and others are being utilized by folks online to create computer-generated art out of either images, keywords, or a combination of both. Some of the results that these generators produce are absolutely stunning, but as is the case with a lot of freely accessible technology, there’s a downside.

TikTok has jumped on the trend by adding a new filter titled “AI Art” that produces a green screen of text-to-image generated pictures that some folks are combining with nudes of themselves to produce some truly cool and unique photos.

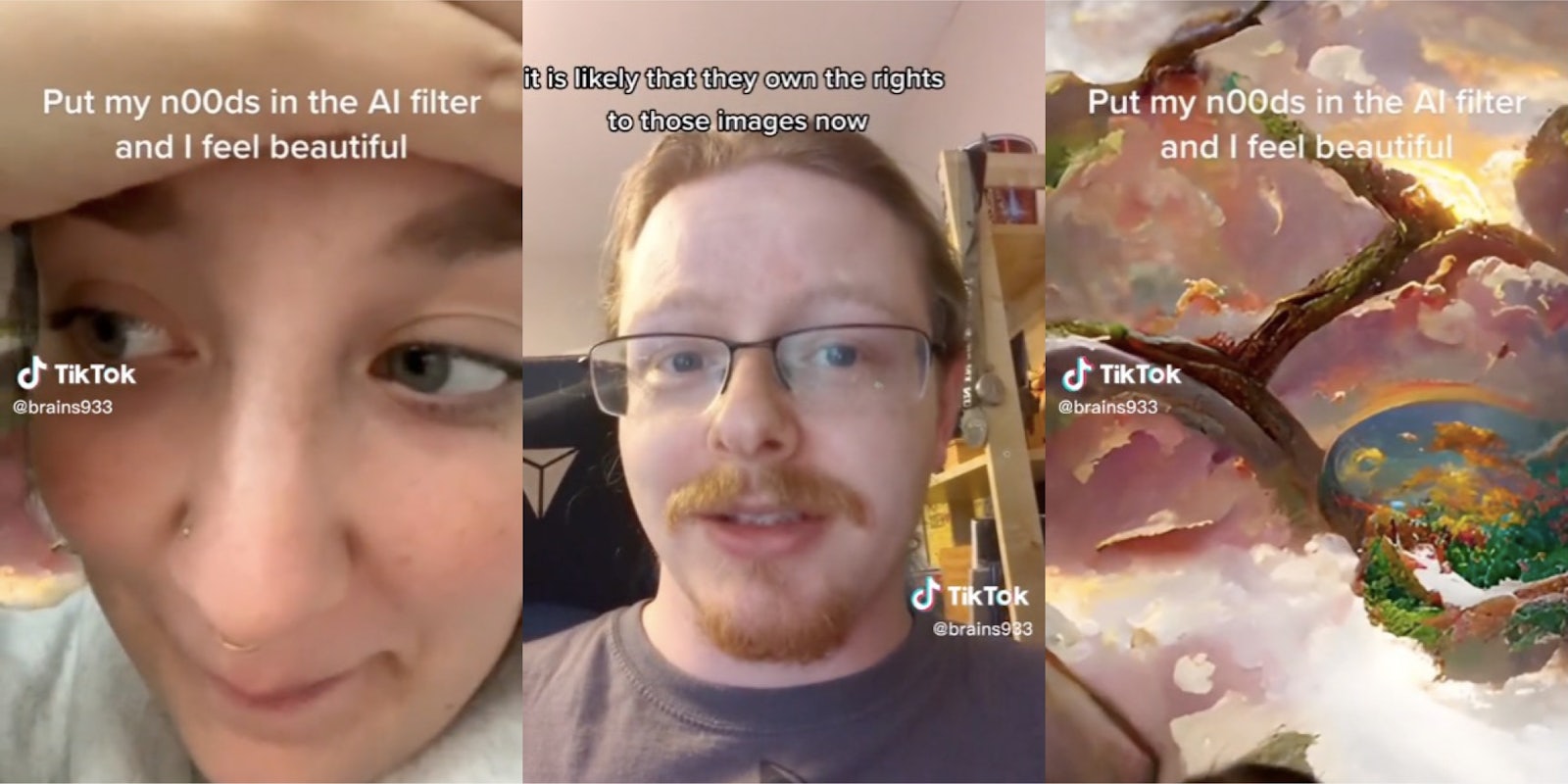

Brains’ video stitches a video uploaded by TikToker Grace, who shared an AI-generated result she was left with after feeding nude photos of herself into the generator. What resulted was a piece of art that looked like some kind of alien, acid-trip vision of what heaven looks like, and it’s simply stunning.

But that gorgeous visual is quickly cut short as Brains appears on the screen.

“These are all crazy,” he starts. He explains how when people upload a photo of themselves to the AI filter, it’s “going somewhere to get processed.”

“It’s being processed on TikTok servers on their computers and likely being stored there,” he continues. “There’s a big database somewhere in TikTok with all of these images in it. And if you read through your terms and conditions of TikTok, it is likely that they own the rights to those images now. And they can do with them whatever the hell they want.”


So how valid are Brains’ claims that TikTok pretty much owns all of the content that you upload on the application? While your videos and content are protected under the app’s licensing agreement, Brownwinick Law states that by using the app, users grant TikTok a “broad license” to use their content, including the images and videos they upload, as TikTok sees fit.

In addition, TikTok has been under close scrutiny from the FCC recently for being a national security risk, with the government agency telling decision makers to ban the application from being downloaded and used by U.S. citizens. This is more than likely because TikTok has been found to monitor and collect user data. The app, which is owned by ByteDance, is also seen as a danger by U.S. government officials since the Chinese Communist Party owns a sizable stake in it.

While it’s difficult to imagine how uploading a nude photo of yourself to TikTok relates to national security concerns, many users weren’t exactly thrilled with the idea of their photos sitting on a server somewhere where employees could potentially access them.

“The absolute ignorance/nonchalance of that creator to the possible effects of this,” one user commented on Brains’ video.

“It’s beyond scary to me that this isn’t common knowledge yet,” another person wrote. “People really should think twice and look into things.”

“When using something is free, you’re usually the product,” one top comment read.

“Keep spreading this info please – not enough kiddos/teens/even adults are aware of this clearly,” a user urged.

Others, however, seemed skeptical about how serious the risks of using the filters really are.

“So they probably own a few billion photos which nobody even looks at or cares about,” a user wrote.

“Tbh people who use Tiktok already don’t care about cyber security or privacy,” another said.

Still, many viewers thanked Brains for his PSA regarding the filter.

As one user wrote, “you’d think people would know better at this time and age of internet tbh but here we are [I guess.]”

The Daily Dot has reached out to both TikTok and Brains via email for further information.