Perhaps the machine uprising begins on the chessboard. At Imperial College London, one student used artificial intelligence to train his computer to be one of the best chess players in the world, and it took only three days.

The self-taught chess engine, known as “Giraffe,” was designed by graduate student Matthew Lai. Computers can already squash human opponents at chess by using their great computational speed to search enormous numbers of possible moves and estimate how likely each is to lead to checkmate, so they generally find the strongest move available. Lai’s Giraffe approaches the same problem from a different direction: it learned to evaluate chess positions by itself instead of relying on hand-coded chess knowledge.

Deep learning helps computers make sense of the world by identifying patterns in large quantities of data. Most programmers hard-code chess knowledge into their engines, but Lai fed Giraffe millions of sample chess positions from a computer database, then applied a random legal move to each position for the sake of additional variety. Before it ever played its first game, Giraffe “studied” 175 million chess positions generated this way, building its own understanding of the 1,500-year-old game.
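To make that data-generation step concrete, here is a minimal Python sketch of the idea. It is not Lai’s code: the names `augment`, `legal_moves`, and `apply_move` are hypothetical, and a toy counting game stands in for a real chess move generator so the example runs on its own.

```python
import random

def augment(positions, legal_moves, apply_move, rng):
    """Apply one randomly chosen legal move to every sampled position.

    `legal_moves(pos)` and `apply_move(pos, move)` are assumed to be supplied
    by the caller (for example, by a chess library); they are placeholders.
    """
    augmented = []
    for pos in positions:
        moves = legal_moves(pos)
        if not moves:  # no legal move (e.g. checkmate or stalemate): skip it
            continue
        augmented.append(apply_move(pos, rng.choice(moves)))
    return augmented

# Toy stand-in for chess: a "position" is an integer, and a "move" adds
# 1 or 2 to it as long as the result does not exceed 10.
def toy_legal_moves(pos):
    return [step for step in (1, 2) if pos + step <= 10]

def toy_apply_move(pos, move):
    return pos + move

samples = [0, 3, 9, 10]
result = augment(samples, toy_legal_moves, toy_apply_move, random.Random(0))
```

Each sampled position yields one slightly perturbed position, which is how a database of a few million games could be nudged into less familiar territory before training.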

Then Lai trained Giraffe at breakneck speed, steadily improving it at its narrowly defined task. He put it up against the Strategic Test Suite, a collection of 1,500 chess puzzles designed to assess a chess engine’s capabilities in a variety of situations. Giraffe ran the puzzles continuously for three days, getting slightly better with every iteration. Eventually it scored 9,700 out of a possible 15,000 points, a score Lai says is on par with the best chess engines in the world.

Giraffe employs a neural network to identify the best possible move in a given chess position. Such networks are a computer-friendly tool for making decisions in a manner similar to the human brain’s. They are already effective tools for building intelligent-seeming systems that operate in a limited space, from controlling a robot’s movements to recognizing an image. Chess, with its limited parameters that allow for fantastic complexity, presents itself as a unique testing ground for technology like this.

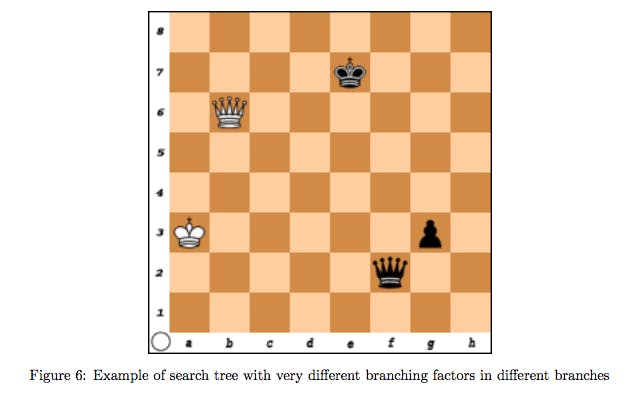

Giraffe’s algorithm processes each chess position through three “input layers.” The first is the state of the game at large: whose turn is it, how many pieces does each side have, who can or cannot castle? The second layer considers the physical location of each player’s pieces on the board, and the third builds a map of attacked and defended territory. Giraffe’s neural network gathers potential moves, mapping its options into branches to sort its “thinking.”
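The three input groups described above can be pictured with a rough Python sketch. This is an illustration under simplifying assumptions, not Giraffe’s actual encoding: the `Piece` and `Position` classes and all function names are hypothetical, and the attack map only counts knight attacks to keep the example short.

```python
from dataclasses import dataclass

KNIGHT_STEPS = [(1, 2), (2, 1), (2, -1), (1, -2),
                (-1, -2), (-2, -1), (-2, 1), (-1, 2)]

@dataclass(frozen=True)
class Piece:
    color: str  # "w" or "b"
    kind: str   # "K", "Q", "R", "B", "N" or "P"
    file: int   # 0..7 (a..h)
    rank: int   # 0..7 (1..8)

@dataclass
class Position:
    white_to_move: bool
    castling: dict  # e.g. {"wK": True, "wQ": False, "bK": True, "bQ": True}
    pieces: list

def global_features(pos):
    """Group 1: side to move, castling rights, piece counts per side."""
    feats = [1.0 if pos.white_to_move else 0.0]
    for right in ("wK", "wQ", "bK", "bQ"):
        feats.append(1.0 if pos.castling.get(right, False) else 0.0)
    for color in "wb":
        for kind in "QRBNP":
            count = sum(1 for p in pos.pieces
                        if p.color == color and p.kind == kind)
            feats.append(float(count))
    return feats

def piece_features(pos):
    """Group 2: normalized board coordinates of every piece."""
    feats = []
    ordered = sorted(pos.pieces,
                     key=lambda p: (p.color, p.kind, p.file, p.rank))
    for p in ordered:
        feats.extend([p.file / 7.0, p.rank / 7.0])
    return feats

def knight_attack_map(pos):
    """Group 3 (simplified): +1 per white knight attacking a square,
    -1 per black knight. A full map would cover every piece type."""
    grid = [0.0] * 64
    for p in pos.pieces:
        if p.kind != "N":
            continue
        sign = 1.0 if p.color == "w" else -1.0
        for df, dr in KNIGHT_STEPS:
            f, r = p.file + df, p.rank + dr
            if 0 <= f < 8 and 0 <= r < 8:
                grid[r * 8 + f] += sign
    return grid

# White king e1, white knight g1, black king e8, White to move.
pos = Position(
    white_to_move=True,
    castling={"wK": False, "wQ": False, "bK": False, "bQ": False},
    pieces=[Piece("w", "K", 4, 0), Piece("w", "N", 6, 0),
            Piece("b", "K", 4, 7)],
)
inputs = (global_features(pos), piece_features(pos), knight_attack_map(pos))
```

Keeping the three groups separate, rather than flattening the board into one long list, mirrors the article’s description of distinct kinds of information being handed to the network.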

It is then capable of “deciding which branches are most ‘interesting’ in any given position, and should be searched further, as well as which branches to discard,” Lai writes in his paper introducing the chess engine. In human terms, this artificially intelligent system plays chess at FIDE International Master level, putting it among the top 2.2 percent of human chess players.
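The branch-sorting idea in Lai’s quote can be sketched in a few lines of Python. This is a hedged illustration, not the engine’s implementation: it assumes the network hands back a probability for each candidate move, and the function name `select_branches`, the example probabilities, and the threshold value are all invented for the example.

```python
def select_branches(move_probs, path_prob, threshold):
    """Keep only the moves whose overall path probability stays at or
    above `threshold`; everything else is discarded from the search.

    `move_probs` maps each candidate move to a (hypothetical) network
    estimate of how promising it is; `path_prob` is the probability of
    the line that led to the current position.
    """
    kept = {}
    for move, prob in move_probs.items():
        child_prob = path_prob * prob
        if child_prob >= threshold:
            kept[move] = child_prob
    return kept

# Made-up numbers standing in for a network's output.
network_output = {"e4": 0.5, "d4": 0.3, "a3": 0.02}
kept = select_branches(network_output, path_prob=0.5, threshold=0.05)
```

Here the two central pawn moves survive and the unpromising `a3` is dropped, so search effort goes to the lines judged most “interesting” rather than being spread evenly across every legal move.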

“Unlike most chess engines in existence today, Giraffe derives its playing strength not from being able to see very far ahead, but from being able to evaluate tricky positions accurately, and understanding complicated positional concepts that are intuitive to humans, but have been elusive to chess engines for a long time,” Lai told MIT Technology Review. “This is especially important in the opening and endgame phases, where it plays exceptionally well.”

One potentially fatal flaw in this system: it is slow. Neural networks take longer than conventional evaluation methods to arrive at a conclusion, and Giraffe takes about 10 times longer to make a move than conventional chess engines do.

If the machine uprising does indeed begin on the chessboard, we have time to run away for now.

H/T MIT Technology Review | Photo via Ian T. McFarland/Flickr (CC BY SA 2.0)