In the ongoing fallout from Facebook’s controversial “emotional contagion” study, the social network and its defenders have relied heavily on claims that a section buried deep inside Facebook’s terms of service gave the company permission to use user data for research purposes.

It now seems, however, that Facebook didn’t even go that far before deciding to let researchers tamper with the emotions of nearly 700,000 users by manipulating their News Feeds to show either mostly positive or mostly negative updates from friends.

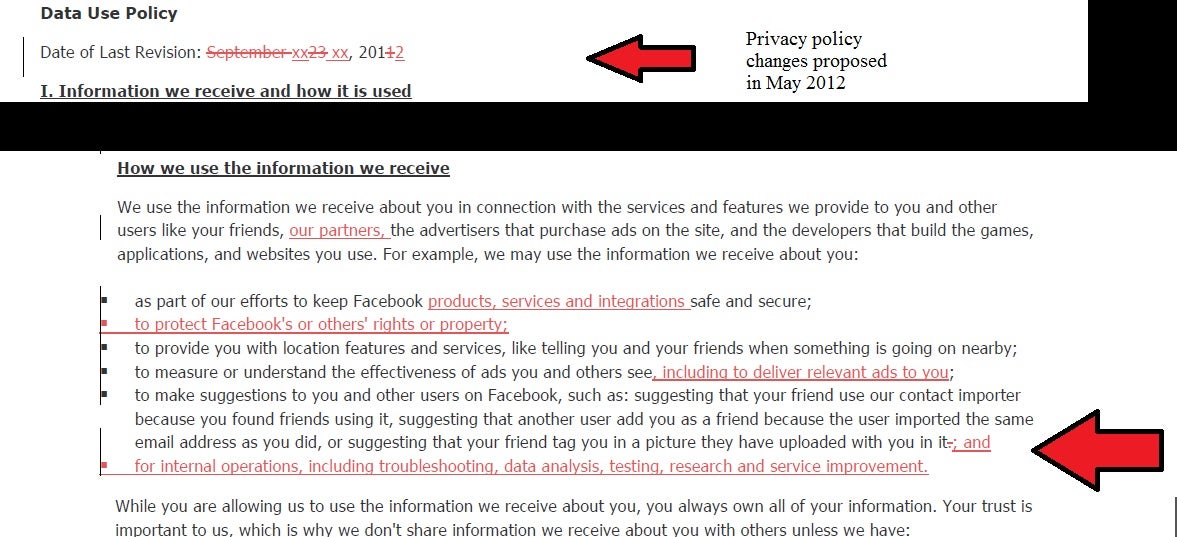

Forbes reports that the ToS language granting Facebook permission to take user information and use it for “internal operations” that included “research” was not added until May of 2012, four months after the experiment was conducted.

In a screenshot taken by Forbes, the relevant change is clear. In May of 2012, Facebook added the line about how it might use data “for internal operations, including troubleshooting, data analysis, testing, research and service improvement.”

Screengrab via Forbes

But even if the clause had been part of Facebook’s privacy terms prior to the experiment, it’s still not clear whether that would have given the company proper cover for its actions. Legal experts say there is a big difference between Facebook saying it plans to use user data for research and actually manipulating its users.

“If you are exposing people to something that causes changes in psychological status, that’s experimentation,” James Grimmelmann, a professor of technology and the law at the University of Maryland, told Slate. “This is the kind of thing that would require informed consent.”

Informed consent would have involved telling users about the experiment beforehand and giving them the option to opt out, then disclosing the specifics of the research as quickly as possible once the experiment had concluded.

In a post published earlier this week, Facebook researcher Adam Kramer defended the experiment and insisted that the study affected a “relatively” small subset of Facebook users.

“The reason we did this research is because we care about the emotional impact of Facebook and the people that use our product. We felt that it was important to investigate the common worry that seeing friends post positive content leads to people feeling negative or left out. At the same time, we were concerned that exposure to friends’ negativity might lead people to avoid visiting Facebook.”

The backlash against Facebook has been intense in the days since news of the experiment broke over the weekend. Many say this is the “final straw” for a company that has played fast and loose with user privacy in the past.

Photo by kris krüg/Flickr (CC BY-SA 2.0)