THE ANTI-SOCIAL WEB: As we see from the ubiquity of online harassment, shaming, and trolling, the social web is as much about ambiguity and antagonism as it is about sharing, connection, and cooperation. In fact, the Web's most obviously anti-social behaviors, including trolling and shaming, are, strangely enough, also its most social. This series, The Anti-Social Web, will explore this overlap to look at various aspects of social behavior online, from “good” to “bad” and all colors between. Guest curated by Whitney Phillips.

By WHITNEY PHILLIPS

Ask 10 people what they think about Internet trolls and you’ll likely get 10 different answers. One person might focus on harmless pranks, another on the lulz, another still on hateful tweets or harassment, generally. Some might even argue that the category doesn’t exist. The Internet seems to be virtually overrun with trolls—but no one can agree on what that term means.

One reason for this confusion is the relative age of the term, which can be traced back to the early or mid '90s, perhaps even earlier—a lifetime in Internet years. Back then, the term “trolling” first referred to disruptive or otherwise annoying speech and behavior online. These trollers, as they were then called, would clog a particular discussion with non-sequiturs, engage in so-called identity deception, and/or commit various crimes against language and logic.

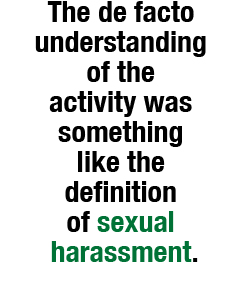

It’s likely that many of these early trollers knew exactly what they were doing, and undoubtedly took a great deal of pleasure from their exploits. Whether or not the aggressors in question meant to upset their targets, however, and whether or not they even identified themselves as trollers, or had conversations about trolling, the behaviors in question were framed almost exclusively in terms of effects. The de facto understanding of the activity was something like the definition of sexual harassment. If a target walked away feeling trolled, then trolling had indeed been afoot.

The troll space began to shift in the early 2000s, when anonymous users of 4chan’s infamous /b/ board appropriated and subsequently popularized a very specific understanding of the term “troll.” For these users, trolling was something that one actively chose to do. More importantly, a troll was something one chose to be. Over the years, and thanks in no small part to the frenzied intervention of mainstream media outlets, a distinctive subculture began to cohere around the term "troll," complete with a shared set of values, aesthetic, and language. (I talk about this relationship in my article “The House That Fox Built: Anonymous, Spectacle and Cycles of Amplification.”)

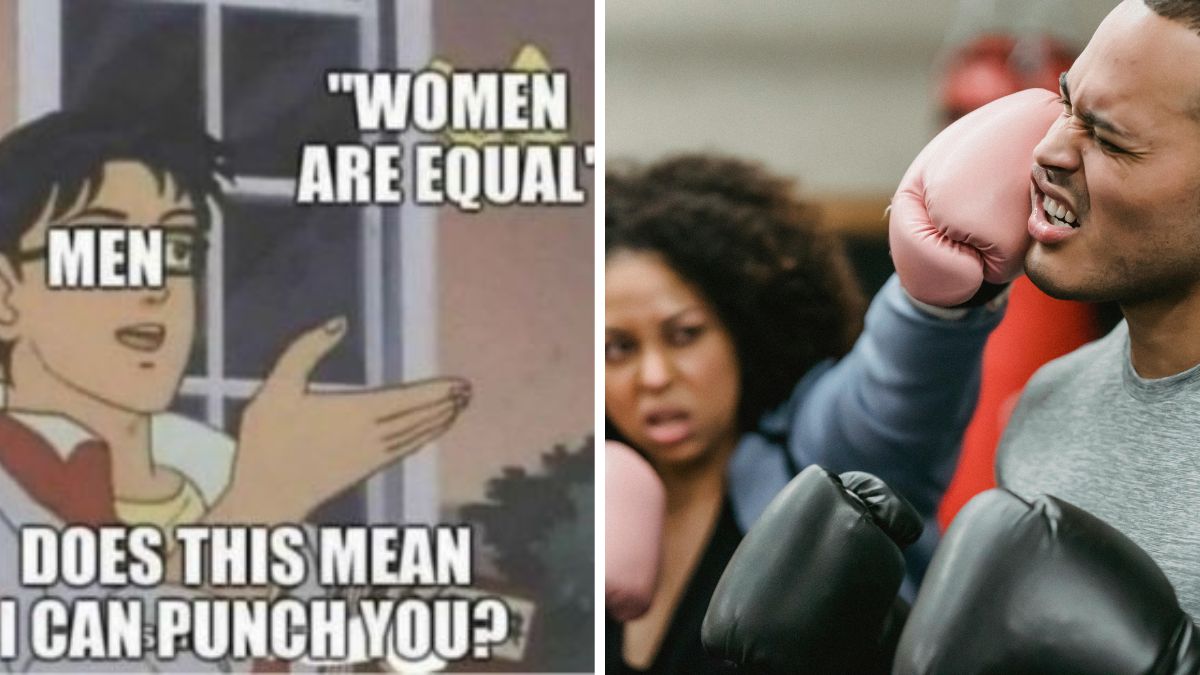

What’s more, trolling in this newly-established subcultural sense, whose most conspicuous characteristic was that participating trolls proudly identified as such, was directly plugged into, and in fact provided a great deal of creative fodder for, a little thing called meme culture. The vast majority of the earliest popular Internet memes—including lolcats, Rickrolling, Advice Dog (which spawned the whole menagerie of Advice Animals), among many others—first percolated in or around the troll space, making trolling an increasingly powerful, but at the time still invisible, cultural force. As memes became more and more prominent, so too did trolling references and trolling behaviors, resulting in what subcultural trolls (that is, trolls of the meme culture/4chan ilk) subsequently lamented as the mainstreaming of Web culture.

Suddenly, trollish references that at one point provided a subcultural Bat Signal—even something, for example, as simple as a troll face—now didn’t mean, or didn’t necessarily mean, the things they used to (Adam Sandler, anyone?). Like so many other references and images once exclusively associated with the troll and early meme space, the troll face has reached the kind of critical mass that renders the image maybe not meaningless, but incredibly difficult to place.

The same has been true of the very term “troll.” Since the meme and lulz-based variety of trolling first emerged from 4chan’s primordial ooze in 2003, hundreds and maybe even thousands of articles and blog posts have been written decrying (or in some cases celebrating) these trolls’ exploits. Despite the fact that the trolls in question embraced a very specific subcultural understanding of the term, “trolling” became the media’s go-to descriptor for all problematic online behaviors, most notably cyberbullying.

For subcultural trolls, this framing was all wrong. The lament that “that’s not trolling” (a sentiment echoed in this Vice article) has become a common refrain within the ranks of self-identifying trolls, who take great offense to what they see as the mainstream media’s bastardization of “their” term.

And yes, it’s true; in a way, the public, not to mention the media, most certainly has adopted “their” term. But the current popular understanding of the term isn’t wrong. If anything, it’s a return to the original, looser definition—the idea that trolling is trolling if you feel you’ve been trolled.

But describing all problematic online behaviors as trolling and all online aggressors as trolls is a bad idea. Not because there is only one “correct” way to troll, as some trolls might insist, but because using the term as a stand-in for everything terrible online is imprecise, unhelpful, and—most importantly—tends to obscure the underlying problem of offline bigotry and aggression.

When deployed as an umbrella category, the term “trolling” implies that all instances of trolling share some basic definitional characteristic. If they didn’t, the behavior wouldn’t qualify as trolling. This is a difficult claim to make even when describing the (relatively) narrow category of subcultural trolling, which can run the gamut from harmless redirects to harassing the friends and family of dead teenagers.

When you’re dealing with a behavioral category that essentially exists in the eye of the beholder, it is even more difficult to establish any coherent unifying characteristic—other than the linguistic framing, of course, which is about as circular as an argument can get (i.e., it’s trolling because we’re calling it trolling).

In a similar vein, the push to describe all aggressive or otherwise annoying Internet users as trolls makes it nearly impossible to say anything meaningful about the group. Not that that has stopped people from trying; I cannot count the number of times I have been approached by journalists wanting to know what motivates trolls.

Given that “troll” in this context often just means “a person who says disruptive things online,” I’m reluctant to make any blanket statements about these so-called trolls. Some people might be in it for the lulz, which is a discussion unto itself (I have grown increasingly wary of the assertion that anything can be done “just” for lulz—a conversation I explore in my ethnographic research on subcultural trolling). Some might be trying to bait their opponent into making an incriminating or otherwise embarrassing statement. Some might genuinely care about a particular issue, and are raising points they think need addressing. Some are posting whatever thing because they are, as people, very stupid, or very mean, or both. Questioning why these so-called trolls do what they do is like trying to psychologically profile an upstairs neighbor you’ve never met, but who you sometimes hear bouncing a basketball.

Ultimately, however, the biggest problem with the vague catch-all term “troll” is that it imposes an artificial boundary between so-called trolls (whatever that even means) and “regular” Internet users. This makes it seem as if the only people engaging in nasty behavior belong to this one, apparently unified group of malcontents, and that is simply false. Nasty behavior online can come from anyone: your neighbor, your kids’ high school classmates, your colleague at work, yourself in a bad mood.

Consequently, lumping “trolls” into one discrete group is not only not helpful; it’s harmful. If there really were some clear-cut division between troll and not-troll, all we’d need to do to solve the very real problem of online harassment and bigotry is ban the trolls—and then boom, suddenly we’d have a fair and equitable Internet. Right?

Not quite. Because the fact is, the division between trolls and regular Internet users is hardly clear cut. Compartmentalizing bigoted speech and behavior within some poorly-defined online non-category (“trolling”) that somehow manages to subsume every unpleasant interaction on the Internet, while establishing a clear demarcation between the “them” who troll and the “us” who do not, only obscures that fact and precludes serious conversations about systemic harassment and bigotry. If it’s all the trolls’ fault, in other words, if they are the clearly aberrant bad guys, then we don’t have to think about how our actions feed into and are fed by the same prejudices that give rise to these kinds of aggressive behaviors: racism, classism, sexism, and trans- and homophobia, to name a few.

So what can be done about the so-called troll problem if we can’t even describe it? For starters, we could replace the vague omni-category of “trolling” with a more precise framing: “online aggression,” for example. I don’t mean to advocate a simple term swap. Particularly when used by the mainstream media, “troll” has a tendency to obscure the specific details of the case at hand. As discussed above, it implies that the behavior in question meets some basic categorical criteria, which more often than not results in lots of unverifiable assumptions about the alleged troll’s motives.

“Online aggression,” in contrast, demands additional detail (what kind? how? where?), thereby discouraging sloppy reporting. If a news account is to make any sense, the person recounting whatever event occurred would be forced to specify the nature and scope of this specific instance of aggression, on what platform the interaction took place, who exactly was targeted, and what resulted from the interaction. This would be far more helpful than simply relying on the term “troll” to do all the work.

I am not suggesting that the term trolling should be eradicated. We can, and absolutely should, still talk about the trolls who self-identify as trolls and who claim to be motivated solely by lulz. It’s just that these behaviors should be regarded as a subset of online aggression, rather than the category itself. What we want are conversations that aren’t doomed to fail before they even start; we need actionable, targeted solutions to the problem of online aggression. And right now, using the label of “trolling” as an online behavioral catch-all just gets in the way.

Whitney Phillips is a media and Internet studies scholar who received her Ph.D. from the University of Oregon in 2012. Her work has appeared in journals such as Television and New Media and First Monday, and she has been interviewed or featured in the Atlantic, Fast Company, and NBC News. She is currently revising her dissertation—which focuses on subcultural trolling—for publication.

Illustration by Jason Reed