How many Twitter accounts does it take to create a fake narrative? Thousands? Tens of thousands? And would you even know it if it were happening?

As it turns out, over the last year just 200 Twitter accounts were responsible for either generating or sharing 140 million tweets devoted to pushing out disinformation about the frequency and effect of voter fraud.

This was the finding of a report produced by a volunteer network of technologists, artists, and academics, including the San Diego Supercomputer Center’s Data Science Hub at the University of California San Diego and IV.ai.

The project was organized by Guardians.ai, with Zach Verdin leading the research in the report, alongside Brett Horvath and Alicia Serrani.

What the trio found was a complex web of Twitter accounts that is “not only growing at an accelerating rate but also coordinating with effective tactics that appear to bypass many of the detection methods of existing disinformation research.”

— Headsnipe011 (@Headsnipe011) November 6, 2018

https://twitter.com/winegirl73/status/824248158112927744

https://twitter.com/zeusFanHouse/status/1054168514263576576

This network uses coordinated sprees of memes and reposts of far-right news stories, often at seemingly random times, to drive the conversation around a topic that’s sure to dominate news coverage if Democrats take the House of Representatives.

It’s also a narrative President Donald Trump is happy to have at the forefront of the national conversation.

Law Enforcement has been strongly notified to watch closely for any ILLEGAL VOTING which may take place in Tuesday’s Election (or Early Voting). Anyone caught will be subject to the Maximum Criminal Penalties allowed by law. Thank you!

— Donald J. Trump (@realDonaldTrump) November 5, 2018

Are these accounts real or bots? Russian or American? Is this a well-funded black ops campaign, or just a bunch of fervent Trump supporters who accidentally racked up nine figures of engagements? Either way, the accounts are connected in several ways.

“[We] found that there was a group of accounts consistently tweeting and retweeting narratives about #VoterFraud. We also noticed that many of these accounts seemed to be connected because they a) follow each other, b) mention or retweet each other often, and c) talk about many of the same topics. There are also aesthetic similarities between many of these accounts. For instance, this network of accounts uses lots of emojis and similar hashtags in their bios and tweets.”

Verdin and his co-workers make it clear in the report that they don’t know exactly what the network is, and may never know.

But as Verdin told the Daily Dot in an interview, it’s a problem that Twitter can’t wrap its head around, and one that could have profound implications for the conversation that follows the 2018 midterms.

The following conversation has been lightly edited for clarity. You can see the accounts here.

What made you start doing the initial searching for the #VoterFraud hashtag?

My partners Brett Horvath, Alicia Serrani, and I were talking about potential issues we wanted to track related to the midterms. Brett and Alicia had the foresight to look at mentions of #VoterFraud.

When did your intuition kick in that something strange was going on?

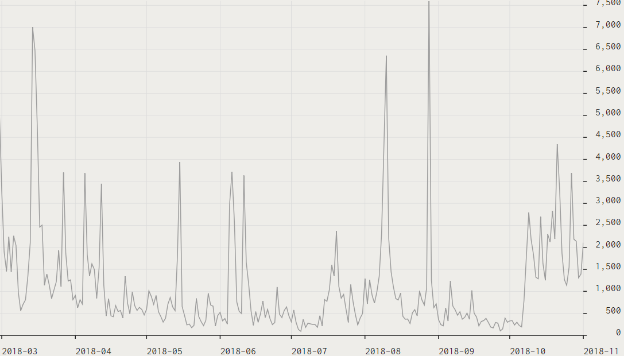

The first moment we realized something strange was happening was when we looked at spikes in mentions of the hashtag #VoterFraud over the last 12 months. Mentions of #VoterFraud consistently spiked to between 5,000 and 7,000, creating a pattern of activity that resembled a heartbeat on an EKG machine.

The second moment was when we looked at mentions of #VoterFraud over 36 months and saw a dramatic spike in mentions right before the 2016 election.

And the third moment was when we looked into the individual profiles pushing #VoterFraud and discovered the surge pattern, which we discussed in our report.

A lot of the grunt work of tracking coordinated trends on Twitter is very data-heavy and hard to parse if you’re not a data wonk. Can you boil down in a few sentences what’s going on here, and why it matters?

We found a network of 200 influencers that drove 140 million posts over the course of a year, amplifying narratives about voter fraud, election integrity, and other divisive conspiracies.

Could the spikes in tweets about voter fraud simply be a natural reaction to the topic being such a moral panic around election time?

Some of the spikes in activity were associated with related news, but many of the spikes happened on days when there was no relevant news story. The fact that the activity looked the same for both was strange to us.

We are not claiming that there have been no documented cases of voter fraud. However, we do investigate whether the frequency at which voter fraud actually occurs warrants the intensity and urgency of stories that we see on Twitter.

https://twitter.com/2018MAGAMidTrmT/status/1028446875991646208

United States Attorney's Office Continues to Protect the Right to Vote and Prosecute Voter Fraud in Upcoming Elections https://t.co/puzZohbCBU

— Headsnipe011 (@Headsnipe011) November 2, 2018

https://twitter.com/Golfinggary5222/status/982297279301287936

This feels like someone laying the groundwork to explain away a Democratic victory by writing it off to voter fraud. Is that your sense as well?

That is definitely one potential outcome of the activity of these accounts. We’re also wondering whether the activity is meant to get into position to frame the outcome of the results, no matter who wins.

Our hope is to provide a window into how these groups of accounts operate so that people can come to their own conclusions about what might be happening here, whether it be coordinated influence campaigns or opportunistic amplification of individual tweets.

Who is the audience for these tweets? Most people aren’t on Twitter, and these networks of Trump supporters (real or fake) tend to be very insular, with very little breaking through to the mainstream. Is it meant for that circle, or someone else?

We’ve been able to confirm that some of these accounts belong to people who have no idea how they got so many mentions and followers so quickly.

To us, that indicates a potential change in tactics from concentrated botnets (which may be loud but insular) to coordination and influence that weaves itself into a conversation among real humans.

Also, we’ve seen what these accounts have been talking about for more than three years begin to pop up more and more in the mainstream news conversation. We can’t identify the cause-and-effect relationship, but a regular, consistent amplification of these narratives is certainly affecting what people see when they go to Twitter and look for conversation around these topics.

Democrat Party Leader Busted Funding Voter Fraud Ring – Young Conservatives. Because you’ll never hear this on the news…. #VoterFraud https://t.co/4brP2wde4d

— John Foster (@jhftennis) November 1, 2018

https://twitter.com/flytrap2001/status/1056330670115643393

https://twitter.com/Matthewcogdeill/status/1058843385585434624

You say upfront in your report that you were left with more questions than answers, which seems the norm with these disinformation campaigns. What’s the next step for you?

We are going to build on this initial research so that we can help the public better understand how the mechanics of coordination and influence happen online.

How on earth does Twitter combat this problem?

Working closely with researchers, technologists, and artists who are exploring these topics and providing interfaces to see how influence happens online is definitely a start.

You can see the research efforts here.