Reddit announced on Thursday that deepfakes with “malicious” intent are now prohibited on the site.

In an attempt to minimize disinformation ahead of the 2020 election, the company updated its impersonation policy. The change is intended to limit fake accounts that imitate real people with public influence, such as journalists and politicians.

But in revamping the policy, the site is also making an effort to stop deepfakes to help prevent confusion. Deepfakes are videos that superimpose a person’s face and voice onto other footage to make it seem like that person is saying or doing something they are not.

“We’ve been doing significant work on site integrity operations as we move into 2020 to ensure that we have the appropriate rules and processes in place to handle bad actors who are trying to manipulate Reddit, particularly around issues of great public significance, like elections,” the announcement states.

Politicians are not the only figures targeted by deepfakes on Reddit.

In 2018, the company first limited deepfake content as users began posting porn featuring celebrities’ faces. Emma Watson and Mila Kunis were among the celebrities whose faces appeared in fake porn videos taken down from the site.

Other than that spike in involuntary porn, this type of content is not yet very common on the site.

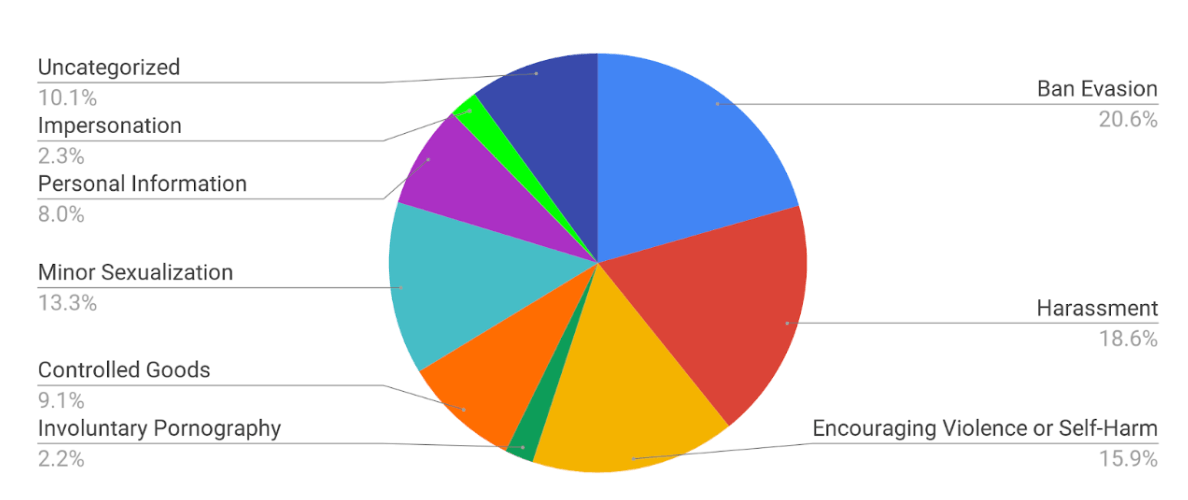

Reddit noted in the announcement that in 2018, impersonation accounted for 2.3% of content that violated policy.

However, the site acknowledged that the task of monitoring deepfake content is not black and white.

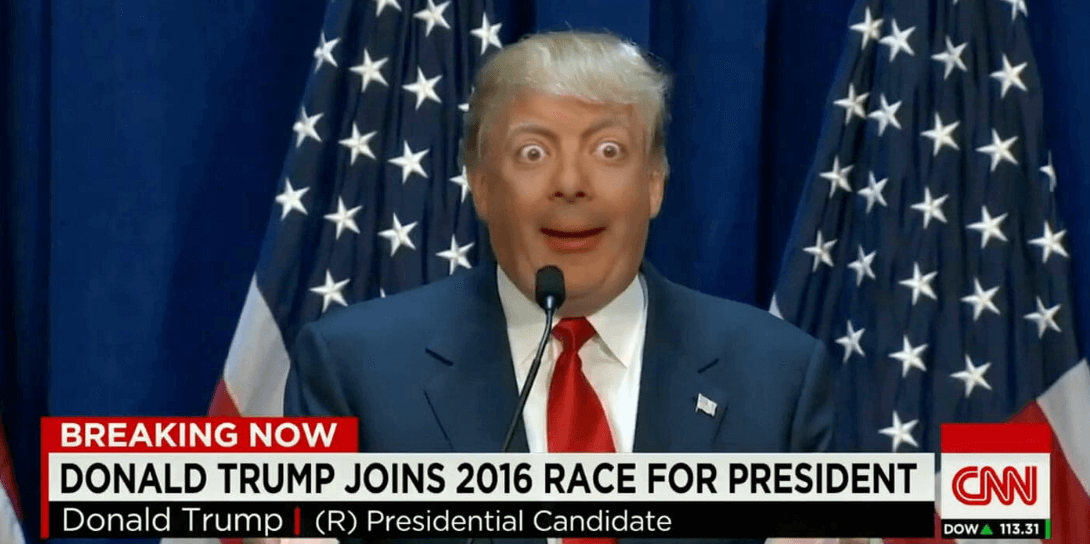

Not all deepfake content is as easily identifiable as something like, say, President Donald Trump with Mr. Bean’s eyes.

Additionally, Reddit does not intend to ban all deepfake content, only that which is deemed “malicious.”

“This doesn’t apply to all deepfake or manipulated content—just that which is actually misleading in a malicious way. Because believe you me, we like seeing Nic Cage in unexpected places just as much as you do,” Reddit added in the announcement.

The move comes just days after Facebook banned some deepfake content.
