Google may still have some tinkering to do when it comes to misleading search results for Nazis, Adolf Hitler, and the Ku Klux Klan.

On Thursday night, writer Sarah Kendzior shared a series of phone screenshots of Google search suggestions for the terms “Nazis are,” “Adolf Hitler is,” and “the KKK is.” Yes, you know where this is going.

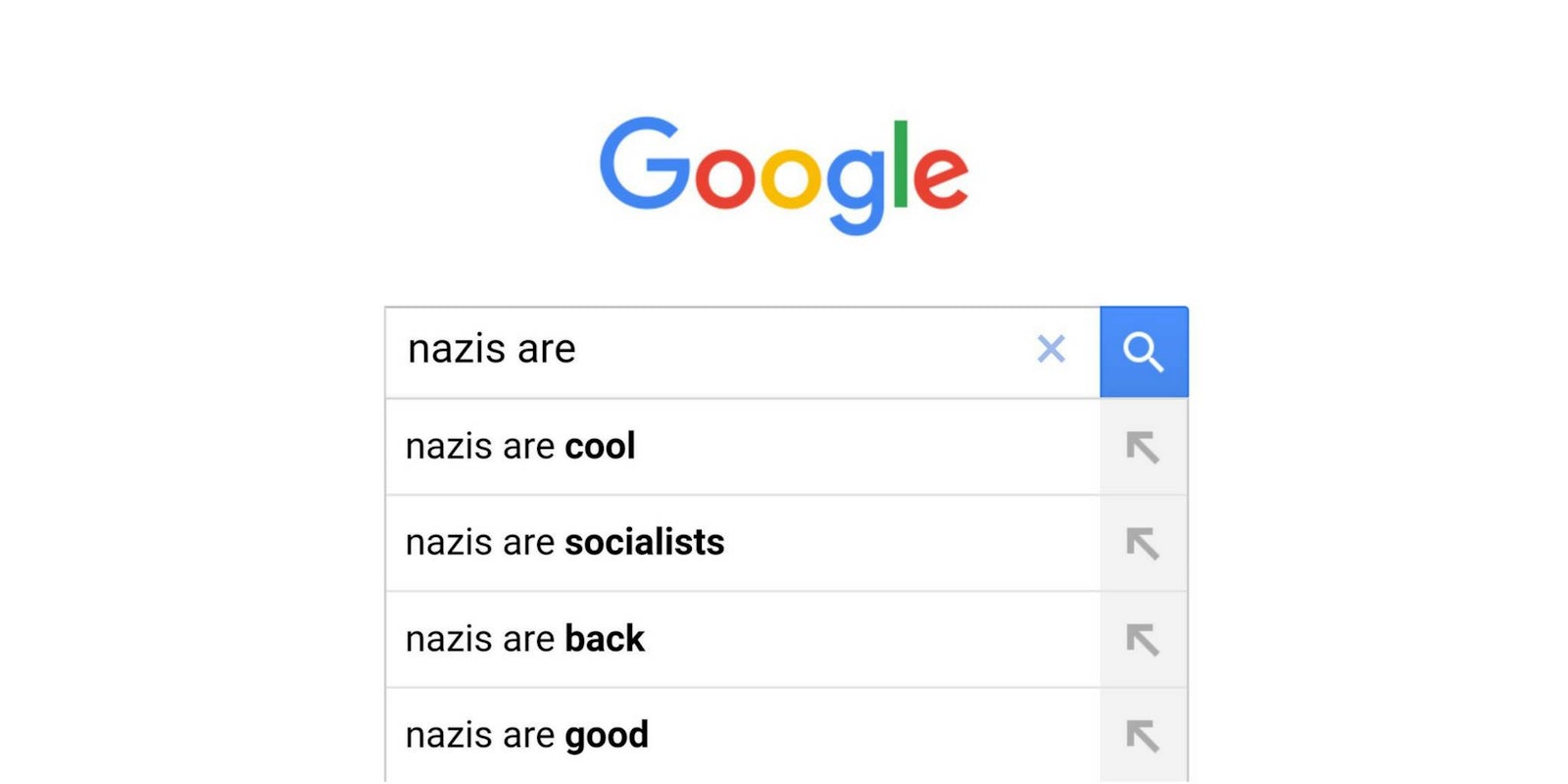

Google autocomplete results for "Nazis are". A friend showed me this today; I tried on my own phone and got same result. pic.twitter.com/SPmDrFaRqZ

— Sarah Kendzior (@sarahkendzior) January 13, 2017

Seriously Google WTF? pic.twitter.com/1hQhgAq4t5

— Sarah Kendzior (@sarahkendzior) January 13, 2017

With the search terms left open-ended, Google gave Kendzior suggestions like “nazis are cool,” “Adolf Hitler is my idol,” and “the KKK is not racist.”

Other additions to Kendzior’s Twitter thread included Google suggestions for theories purporting to debunk the Holocaust.

— Kenny (Taylor’s Version) 🇺🇦🌻 (@ProfessorShakey) January 13, 2017

Got same top three as you. WTF… pic.twitter.com/KwRLYfMFLm

— Sarah Kendzior (@sarahkendzior) January 13, 2017

Our own swing at the Google searches (through the Google Chrome iPhone app in incognito mode) yielded the same answers, save for a few additions: “nazis are evil,” Hitler is “stressed out” and “appointed chancellor of Germany,” and the KKK is “dead” and “not Christian.”

While “Holocaust” yields neither “is real” nor “is a myth,” it does lead to the suggestions “is” and “Israel.”

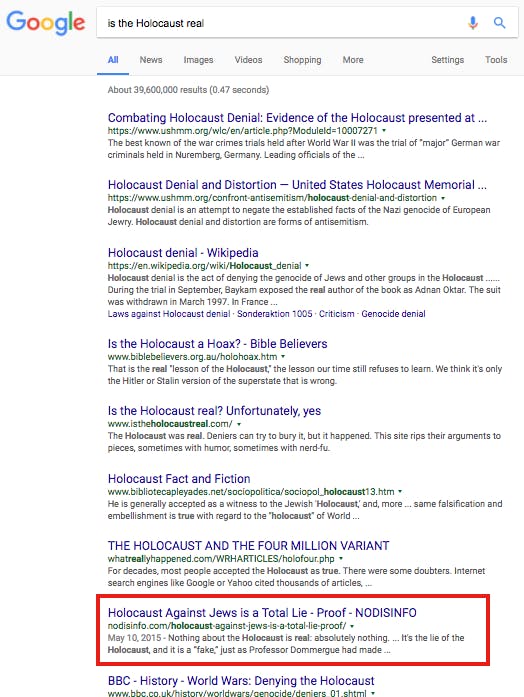

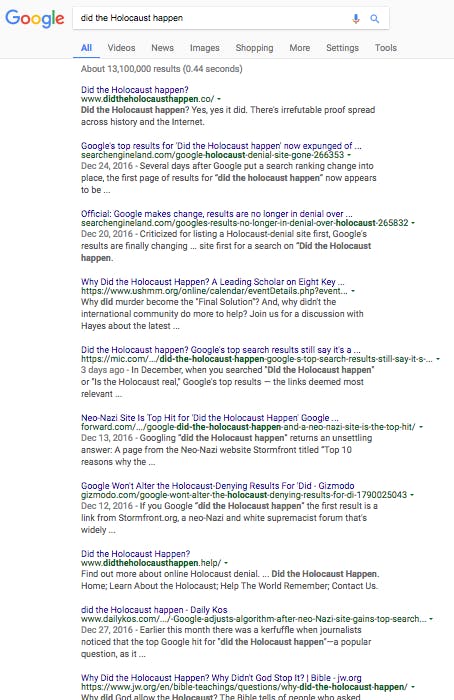

The discovery of these leading suggestions comes nearly a month after Google decided to remove Holocaust-denying websites from the top search results for the query “did the Holocaust happen.” In early December, the company initially resisted addressing the issue and instead issued a statement saying that Google did not endorse the views of “hate sites,” but it reversed that decision two weeks later.

Google’s solution hasn’t been entirely effective, however. According to a Mic article published on Jan. 10, the first results for Mic’s own searches for “did the Holocaust happen” and “is the Holocaust real” were Holocaust-denying articles. Those searches were also done in Google Chrome’s incognito mode.

Our Google search for “is the Holocaust real” turns up the same Holocaust-denying article, though as the eighth search result rather than the first, as in Mic’s search. The first page of search results for “did the Holocaust happen” shows no Holocaust-denying sites or articles.

Kendzior isn’t the first person to point out Google’s leading search suggestions. As the Guardian has noted, the terms “climate change is” and “Sandy Hook” yield conspiracy suggestions.

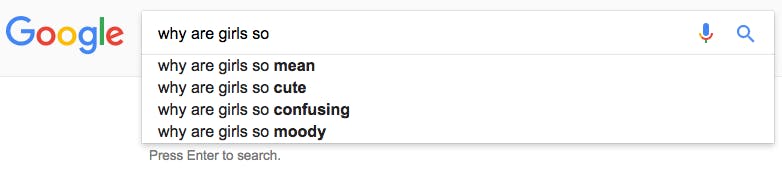

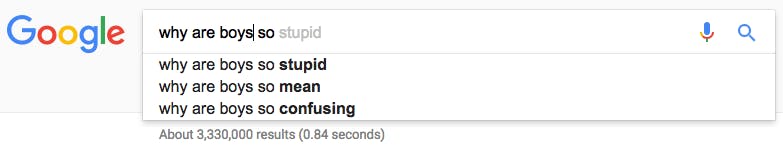

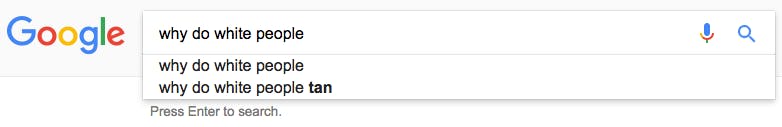

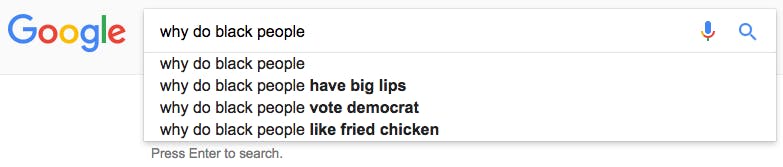

Similarly, searches for terms such as “why are boys/girls so,” “why do black/white people,” and myriad other terms on race and gender lead to stereotypical autocomplete suggestions.

According to Robert Epstein, a research psychologist at the American Institute for Behavioral Research and Technology, Google’s search results and autocomplete predictions have the power to shift opinions and even influence elections.

“We know that if there’s a negative autocomplete suggestion in the list, it will draw somewhere between five and 15 times as many clicks as a neutral suggestion,” Epstein told the Guardian. “If you omit negatives for one perspective, one hotel chain or one candidate, you have a heck of a lot of people who are going to see only positive things for whatever the perspective you are supporting.”

This theory proved popular during the 2016 election. Epstein himself conducted experiments in which he found that Google suppressed negative autocomplete search results for Democratic presidential candidate Hillary Clinton. Though Epstein published his findings through a Russian news agency in September, news site SourceFed alleged similar findings in June. Even President-elect Donald Trump peddled the theory as fact.

At the time of SourceFed’s investigation, the product management director for Google search, Tamar Yehoshua, published a post on the company’s blog addressing the search autocomplete feature and the alleged covert “Clinton crime” cover-up.

Yehoshua wrote that the autocomplete algorithm was designed to avoid completing a search for a person’s name with offensive terms.

“Autocomplete isn’t an exact science, and the output of the prediction algorithms changes frequently. Predictions are produced based on a number of factors including the popularity and freshness of search terms,” Yehoshua wrote. “Given that search activity varies, the terms that appear in Autocomplete for you may change over time.”

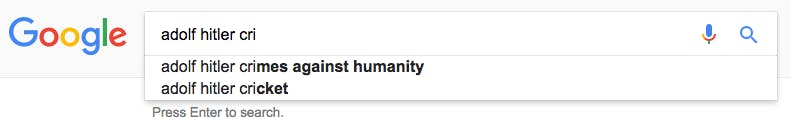

Yehoshua’s response indicated that, ultimately, these autocomplete predictions reflect Google search’s users themselves. As for the alleged crime cover-up, however, the Daily Dot found that most searches for a celebrity’s or politician’s name followed by “cri” did not autocomplete with the suggestion “crime,” save for a search of “pharma bro” Martin Shkreli.

At least Google’s autocomplete predictions exempt Hitler himself from that courtesy, too.